AI Model Testing Protocols: Best Practices

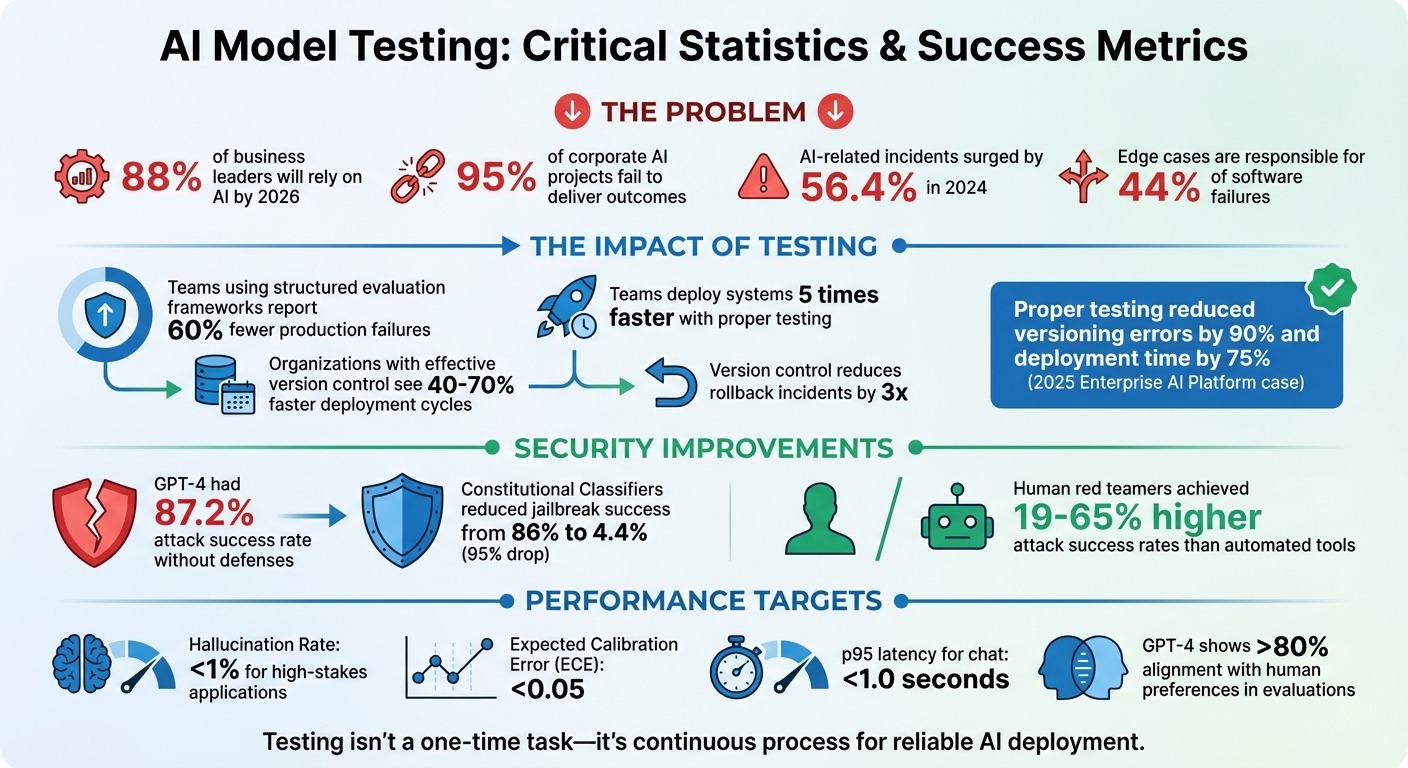

AI models fail differently from traditional software. Instead of crashing, they can confidently produce wrong results, making testing critical for reliable deployment. With 88% of business leaders relying on AI by 2026 but 95% of corporate AI projects failing to deliver outcomes, testing ensures accuracy, safety, and compliance.

Key Takeaways:

- Why Testing Matters: AI systems face unique risks like biased outputs, model drift, and adversarial attacks.

- Testing Steps: Define clear objectives, evaluate metrics like precision and recall, and document assumptions.

- Advanced Techniques: Use edge-case scenarios, synthetic data, and stress testing to uncover vulnerabilities.

- Post-Deployment Monitoring: Track data drift, audit logs, and integrate user feedback for continuous improvement.

- Security Focus: Protect against adversarial inputs and align with regulations like the EU AI Act.

Testing isn’t a one-time task - it’s a continuous process that ensures your AI systems remain reliable, secure, and effective.

AI Model Testing Statistics and Key Metrics for Reliable Deployment

How to Test AI Models: The 2 Methods That Actually Work

sbb-itb-903b5f2

Define Testing Objectives and Scope

Setting clear, measurable goals is crucial when testing. Vague targets like "the model should be helpful" don't cut it. Instead, focus on specific, trackable success criteria. For instance, you might require factually accurate summaries based on documented sources or error-free multi-leg flight bookings. This approach replaces subjective opinions with concrete benchmarks that you can measure and monitor.

Identify the Model's Purpose

Start by understanding the problem your model is designed to solve and who it serves. A model built for medical diagnostics, for example, will have vastly different requirements than one meant for entertainment recommendations. Factors like the domain, target audience, and intended functionality will shape every decision - from the data you collect to the risks you need to manage. A well-known example is Amazon's 2018 recruitment tool, which failed because it relied on gender-biased training data, ultimately penalizing resumes that mentioned "women's chess club captain".

Set Evaluation Metrics

Choose metrics that align with the real-world impact of mistakes in your system. In fraud detection, precision is key because false positives can create costly disruptions for legitimate users. In medical screening, recall (or sensitivity) takes priority since missing a diagnosis can have serious consequences. For search and recommendation systems, metrics like Precision@K or NDCG are useful for assessing how well the ranked results meet user expectations. If you're working on a Retrieval-Augmented Generation (RAG) system, focus on metrics like groundedness and context recall to ensure the model's responses stick to the provided data. Teams that use structured evaluation frameworks report up to 60% fewer production failures and deploy systems five times faster. Once your metrics are in place, make sure to document any assumptions or limitations.

Document Assumptions and Limitations

Transparency is non-negotiable. Clearly outline potential sources of variability, such as reliance on training data, the "black box" nature of deep learning models, and the risk of model drift - where accuracy declines as real-world data evolves over time. Be explicit about what your model can and cannot do. This level of detail is critical for meeting regulatory requirements like GDPR and the EU AI Act, and it helps avoid "specification failures" where unclear requirements lead to misaligned tasks.

"Security is not sufficient, AI Trustworthiness is the real objective" - OWASP Foundation

Design and Organize Test Cases

Once you've outlined your objectives, the next step is to build a well-structured library of test cases that covers both routine operations and worst-case scenarios. Think of this as setting up a safety net to catch potential issues before they impact your users. The goal here is simple: systematically minimize the chances of your AI behaving unpredictably in production. These test cases will serve as the foundation for all future evaluation efforts.

Create Core Functionality Test Cases

Begin by creating a "Golden Dataset" - a static, high-quality collection of test cases that represent your model's primary capabilities and typical usage scenarios (often referred to as "happy path" scenarios). The idea isn’t to test every possible input but to confirm that the basic logic and functionality remain intact. For instance, you can use basic sanity tests to ensure that API endpoints return predictions in the correct format and reject invalid inputs, like unsupported file types.

"Data is the software of AI; we need to test it as much as we test code." - Citrusbug Technolas

When testing generative models, prioritize pairwise comparisons - evaluating which of two outputs is better - over open-ended generation. Large language models (LLMs) tend to excel at comparing outputs rather than creating entirely new ones. To ensure automated scoring aligns with user expectations, calibrate it with human judgment. For example, GPT-4 has demonstrated over 80% alignment with human preferences when evaluating outputs, making it a reliable tool for such tasks.

Develop Edge-Case Scenarios

Edge cases are responsible for 44% of software failures, so they deserve special attention. These scenarios can be identified through methods like boundary value analysis (testing the extremes of input ranges), reviewing historical data for past failures, and hypothesizing potential failure modes based on external factors. For AI models, this includes testing inputs with typos, outliers, contradictory instructions, or excessively long text.

Use property testing to define universal rules - such as "the model must always return valid JSON" - and then attempt to break those rules. Incorporate fuzz testing by feeding the model invalid or random data to expose unhandled exceptions. Additionally, persona audits can help uncover biases by testing the same prompts across different demographic indicators. Once these edge cases are addressed, you can broaden your testing efforts with synthetic data and stress tests to simulate extreme conditions.

Add Synthetic and Stress Testing

Synthetic data generation allows you to scale your testing far beyond the limits of real-world data. Start with a seed dataset of high-quality examples and use data synthesis tools to create thousands of variations that cover rare or missing edge cases. For text models, techniques like synonym replacement and varying word counts can help test robustness. For vision models, adding noise or applying random cropping can achieve similar results.

Ensure lexical diversity by varying vocabulary and query structures, and aim for semantic diversity by including sensitive topics or global contexts. For Retrieval-Augmented Generation (RAG) systems, generate input-output pairs directly from your knowledge base to verify that the model can accurately retrieve and present information based on specific source facts. During stress testing, monitor factors like inference latency and resource usage to identify potential bottlenecks.

"AI fails differently. Traditional software crashes. AI gives wrong answers that look right." - Olexandra Baglai, Testfort

Validate Technical Performance

After creating test cases, the next step is to evaluate how your model performs under varying data conditions. This means checking whether the model behaves consistently when exposed to real-world variations, diverse subgroups of data, and repeated runs. The goal here is to ensure the AI remains dependable under pressure.

"Generative AI is variable. Models sometimes produce different output from the same input, which makes traditional software testing methods insufficient for AI architectures." - OpenAI

Run Slice-Based Evaluations

To refine your evaluation process, break down your model's performance into specific data slices. These slices might include areas like intent understanding, retrieval accuracy, reasoning quality, formatting consistency, and safety compliance. This approach helps pinpoint weaknesses that overall accuracy metrics might miss. For example, Macro-F1 is a useful metric for assessing performance on smaller, less-represented groups that simple accuracy might overlook.

Another helpful tool is persona audits. These audits test for relational biases by running identical prompts but altering demographic indicators like names, ages, genders, or ethnicities while keeping the context consistent. If these changes affect the model's tone, information density, or decision-making, it could highlight biases that need to be addressed.

Perform Perturbation Testing

Stress testing is a great way to understand how robust your model is. By tweaking inputs, you can uncover vulnerabilities in real-world scenarios. For instance, introduce typos, misspellings, synonyms, slang, or noise to see how the model handles imperfections. An example might be swapping "physician" for "doctor" or adding common spelling errors to test its adaptability. Similarly, you can test for demographic consistency by changing names like "John" to "Jamal" and observing any shifts in responses.

You can also stress test with constraint collisions - for example, giving conflicting instructions like "be extremely detailed but answer in one sentence" - to see if the model recognizes and resolves the conflict. Another method is using chained commands, such as asking the model to "summarize this article, then turn it into a poem, then end with a joke", to identify where it might falter in following complex instructions. These tests help expose vulnerabilities that might not be apparent in standard evaluations.

"Adversarial testing involves proactively trying to 'break' an application by providing it with data most likely to elicit problematic output." - Google Developers

Measure Stability and Consistency

Since AI models can sometimes produce varying outputs from the same input, it's important to run multiple evaluations and analyze the distribution of metrics like the Consistency Score and Task Success Rate. For high-stakes applications, aim to keep the Hallucination Rate below 1% and the Expected Calibration Error (ECE) under 0.05.

To reduce random variability, set your model's temperature to 0 (or within 0–0.2) during validation. Also, freeze model versions and prompts to ensure results are reproducible. Monitor paired metrics - such as helpfulness versus refusal rate or recall versus precision - to avoid "metric gaming", where improving one metric comes at the expense of another. Additionally, keep an eye on p95 and p99 latency to ensure the model operates efficiently; for chat applications, p95 latency should stay under 1.0 seconds. For instance, optimizing retrieval parameters in one case reduced hallucinations to under 1% and brought latency below 1.0 seconds, which led to a 28% drop in escalations.

Test for Security and Safety

Once technical performance is validated, the focus shifts to safeguarding the model against malicious inputs in real-world situations. Unlike traditional software testing, AI security testing involves addressing unique vulnerabilities such as adversarial prompts and system-level exploits that don't typically exist in conventional applications.

"Human judgment remains essential for prioritizing risks, designing system-level attacks, and assessing nuanced harms." - Microsoft AI Red Team

Conduct Adversarial Testing

The first step is identifying all potential entry points for malicious inputs. These could include user prompts, system prompts, file uploads, retrieval-augmented generation (RAG) data sources, or API parameters. Frameworks like the OWASP LLM Top 10 provide a systematic approach to identifying vulnerabilities such as prompt injection, data leaks, and unauthorized access.

For context, earlier models like GPT-4 exhibited an 87.2% attack success rate when defenses weren’t in place. However, the introduction of Constitutional Classifiers dramatically reduced jailbreak success rates from 86% to 4.4%, representing a 95% drop in vulnerabilities. Interestingly, human red teamers outperformed automated tools, achieving attack success rates 19% to 65% higher, underscoring the need for both human expertise and automated testing.

To bolster security, use hybrid red teaming. This method combines human creativity for uncovering new attack vectors with AI’s ability to scale execution. Focus on system-level vulnerabilities, as simple exploits targeting system components are often more effective than intricate model-level attacks. These insights can then inform domain-specific safety evaluations tailored to the model's intended use.

Evaluate Safety in Domain-Specific Applications

Generic benchmarks often fail to capture risks unique to specific domains. For instance, a medical AI must be validated against clinical guidelines, while a financial chatbot needs safeguards to prevent unauthorized investment advice. Testing resources should be allocated based on the potential cost of failure. For example, Tier 1 (High-Risk) applications like legal or healthcare AI demand extensive human-in-the-loop adversarial testing.

Incorporate a dual-review framework for high-stakes applications. Here, a "Supervisor" agent evaluates the primary model's output against trusted, domain-specific databases to identify hallucinations or inaccuracies. For critical applications like hiring or loan approvals, perform thorough persona audits to address risks specific to each domain.

Testing should also align with regulatory standards. For instance, the EU AI Act mandates pre-release red teaming for systemic AI models by August 2025, with penalties reaching $15M or 3% of revenue for non-compliance. Similarly, the Colorado AI Act offers safe harbor for organizations adhering to the NIST AI Risk Management Framework starting February 2026. These practices not only enhance safety but also ensure compliance with evolving regulations.

Document Risks and Mitigation Strategies

Maintain a risk register to track vulnerabilities systematically. Each entry should include a Risk ID, system name, category (e.g., Bias, Security, Performance), description, and an assigned owner. Use tools like a 1–5 Likelihood/Impact matrix or the Common Vulnerability Scoring System (CVSS) to rank threats by severity.

For each vulnerability, define a treatment strategy:

- Avoid: Eliminate the risky feature.

- Mitigate: Introduce controls to reduce risk.

- Transfer: Use insurance or contracts to shift risk.

- Accept: Monitor within acceptable risk levels.

Quantify both Inherent Risk (before controls) and Residual Risk (after mitigation).

"Use a structured ontology to model attacks including adversarial actors, TTPs (Tactics, Techniques, and Procedures), system weaknesses, and downstream impacts. This enables systematic tracking and improvement." - Microsoft AI Red Team

When documenting AI failures, include the input, output, expected response, and supporting contextual evidence. Screenshots and logs are crucial, as AI failures often hinge on context - sometimes a single word can alter the outcome. Categorize issues with clear labels like "Prompt Injection", "Factual Hallucination", "Tone Drift", or "Data Leakage" to streamline prioritization and resolution. This detailed documentation not only facilitates continuous improvement but also ensures compliance with regulatory requirements.

Ensure Reproducibility and Version Control

After conducting security and safety tests, it’s critical to focus on test reproducibility and version control. These practices ensure that any improvements made to AI models can be consistently verified. Unlike traditional software, AI models often produce non-deterministic results - meaning the same input might not always yield the same output. This variability can stem from factors like model sampling, changes in context, or differences in tool availability. To manage these complexities, standardized tracking and version control are essential for maintaining consistency across testing iterations.

"Model versioning is not optional for production ML. It is the foundation that makes everything else possible: reproducibility, auditability, rollbacks, and continuous improvement." - OneUptime

Track Testing Artifacts

Comprehensive documentation is the cornerstone of reproducible testing. This includes logging hardware specs (like CPU/GPU details and CUDA support), development environments (such as operating systems and library versions), and model architecture details (e.g., layer sizes, initialization weights, and hyperparameters). It’s also important to document random seeds, Git commit hashes, training durations, and hardware configurations for every test run.

For datasets, versioning is key. Record raw data hashes, preprocessing scripts, feature pipelines, and train/test splits. Tools like MLflow's LoggedModel simplify this process by acting as a centralized hub for storing code references, configurations, dependencies, and performance data in a single, versioned entity. Because model binaries can be massive, store them in specialized artifact repositories (like DVC or S3), using integrity checks like SHA256 hashes, rather than in Git repositories.

In Generative AI (GenAI) applications, even minor changes to prompts can dramatically affect output quality and tone. To address this, prompts should be versioned alongside the model. Establishing a "Golden Test Set" of 20–30 prompts that reflect key use cases can serve as a baseline for evaluating different model versions. For example, in 2025, an Enterprise AI Platform managing thousands of models adopted a centralized registry and GitOps workflow. By automating versioning and testing, they cut versioning errors by 90% and deployment time by 75%. Such rigorous documentation ensures reliable rollbacks and supports continuous improvement.

Once all artifacts are logged and properly versioned, comparing performance across versions becomes much easier.

Use Comparison Dashboards

To track performance across versions, systematic comparison of metrics is essential. Monitor quality indicators like accuracy, precision, and recall alongside latency measurements to detect regressions before models reach production. Advanced tools, such as GPT-4’s evaluation capabilities, align closely with human assessments, reducing the reliance on costly manual validation.

A structured workflow - spanning Development, Staging, Production, and Archived phases - can help teams manage which version is active and enable quick rollbacks if needed. For instance, in January 2025, GitHub’s engineering team used a custom platform built on GitHub Actions to evaluate models like Claude 3.5 Sonnet and Gemini 1.5 Pro. They conducted over 4,000 offline tests and 1,000 technical questions on candidate models. By routing API calls through a proxy server, they iterated on new versions without modifying product code, ensuring all performance and safety metrics were validated before deployment.

Standardize Testing Environments

Maintaining consistent testing environments is critical for validating reproducibility. Variations in local setups can introduce discrepancies that compromise reliability. To avoid this, containerization tools like Docker can standardize environments across local and distributed testing setups. Additionally, capturing the exact Python version and package list (using tools like pkg_resources) ensures consistency.

Repeating evaluations multiple times helps account for variability in results. Align data sizes with processor memory limits and ensure GPU frameworks like CUDA are consistent across testing nodes to prevent performance differences. Teams that implement effective version control in machine learning report 40–70% faster deployment cycles and three times fewer rollback incidents.

Establish Post-Deployment Monitoring

Once test cases are defined and version control is in place, the next step is post-deployment monitoring. This ensures your model remains effective over time. While initial testing is crucial, it’s not enough. In production, models face ever-changing real-world data. As this data shifts, performance can degrade. Without monitoring, these issues might go unnoticed, leading to "silent failures" - situations where a system runs but produces increasingly inaccurate results.

"Model monitoring is the backbone of modern MLOps, enabling organizations to maintain healthy models in real-world workflows." - WitnessAI

To keep your model aligned with user needs, focus on three key practices: tracking data pattern changes (drift), auditing production logs for anomalies, and transforming user feedback into actionable test cases. Together, these create a feedback loop that maintains accuracy and relevance.

Monitor for Model Drift

Model drift happens when production data no longer matches the data the model was trained on. Drift can take three forms: data drift (input data distributions change, like a shift in user demographics), concept drift (the relationship between inputs and outputs evolves, such as due to market changes), and prediction drift (the model’s output distribution shifts over time).

To identify drift, use statistical methods like the Kolmogorov-Smirnov (KS) test for tabular data or Wasserstein distance for multi-dimensional embeddings. The Population Stability Index (PSI) is another tool for measuring distribution shifts. PSI values below 0.1 suggest no significant change, values between 0.1 and 0.2 indicate moderate changes, and anything above 0.2 calls for immediate action. For the KS test, a p-value less than 0.05 typically signals significant drift. Google Cloud's Vertex AI suggests starting with a 0.3 threshold for most features.

"Data drift occurs when production data diverges from the model's original training data." - Bob Laurent, Head of Product Marketing, Domino Data Lab

When drift exceeds thresholds, consider automated retraining or controlled rollouts like shadow or canary deployments to validate updates. For generative AI, track input prompt embedding shifts instead of analyzing raw text to measure drift effectively.

Audit Production Logs

Detailed logging is essential for understanding how models behave in production. Logs should capture prompts, model responses (both pre- and post-processing), intermediate steps, tool or function calls, and token usage. Use unique trace IDs to link related actions across complex workflows or multi-turn conversations.

To analyze logs at scale, automated tools like LLM-as-Judge can use a separate, more advanced model to evaluate outputs based on relevance and coherence. Rule-based systems can help detect issues like personally identifiable information (PII) or banned terms, while classifiers can flag toxicity or hallucinations. Operational metrics, such as latency spikes or rising costs per prediction, can also signal potential issues.

"LLM observability is not optional for production AI. Models that look fine in evaluation degrade in production." - Swept AI

Before deployment, establish a clear logging schema. For AI agents, monitor metrics like citation coverage (the percentage of facts supported by sources) and refusal rate (how often the model declines to answer queries). When labels aren’t available, use prediction drift - significant changes in output distribution - as a stand-in for model degradation.

These insights are invaluable for refining and improving your model over time.

Add Feedback into Iterative Testing

Failures in production can serve as new test cases. User interactions - like ratings, retries, or session drop-offs - offer critical clues for fine-tuning.

When rolling out updates, use strategies like shadow testing or canary deployments to validate changes before a full release. In A/B tests, routing about 5% of traffic to the new version allows you to compare performance without significant risk.

To streamline this process, integrate evaluation directly into CI/CD pipelines. This ensures every prompt or model update triggers automated testing against ground truth datasets. Keep a versioned log of all models and have clear rollback plans in case retrained models underperform. This continuous cycle of oversight ensures your models remain reliable and effective throughout their lifecycle.

Conclusion

Testing AI models isn't a one-and-done task - it’s a continuous process that plays a critical role in separating successful AI deployments from the majority of projects that struggle to deliver results. The practices outlined here provide a structured approach: setting clear goals, creating strong test cases, validating technical performance, defending against adversarial risks, managing version control, and monitoring performance after deployment. Together, these steps ensure AI systems operate with accuracy, safety, and reliability.

This focus on rigorous testing becomes even more essential when considering the rising risks. AI-related incidents surged by 56.4% in 2024 alone, highlighting the need for thorough evaluation as both a strategic priority and an ethical responsibility. As Michael Giacometti, VP of AI & QE Transformation at TestingXperts, aptly states:

"You not just own the code, you also own the outcomes".

This underscores the importance of testing for accuracy, fairness, safety, and reliability throughout every stage of an AI system's lifecycle.

"The difference between AI systems that thrive in production and those that fail isn't the sophistication of their models - it's the rigor of their evaluation frameworks." - orchestrator.dev

FAQs

What metrics should I choose for my AI use case?

When it comes to evaluating your AI model, picking the right metrics is key. The choice depends on the type of task your model is tackling and the specific goals you have in mind.

For classification tasks, you might want to look at metrics like:

- Accuracy: Measures the percentage of correct predictions out of all predictions.

- Precision: Focuses on how many of the positive predictions were actually correct.

- Recall: Also known as sensitivity, it shows how well the model identifies all actual positives.

- F1 Score: A harmonic mean of precision and recall, useful when you need balance.

- ROC AUC: Evaluates the trade-off between true positive and false positive rates, giving a broader view of performance.

For regression models, consider metrics such as:

- Mean Absolute Error (MAE): Captures the average magnitude of errors in predictions, without considering their direction.

- Root Mean Squared Error (RMSE): Similar to MAE but gives more weight to larger errors, making it useful for identifying significant deviations.

- R-squared: Indicates how well the model explains the variability of the target variable.

The key is to align your metric selection with your specific goals and the nature of your data. For example, if false negatives are more costly than false positives, prioritize recall. On the other hand, if you’re aiming for overall prediction accuracy, metrics like RMSE or accuracy might be more suitable.

How do I build a “golden dataset” for AI testing?

Building a “golden dataset” is about creating a dependable benchmark to evaluate how well an AI model performs. Here’s what it takes:

- Define evaluation criteria: Clearly outline what qualifies as correct, safe, and reliable responses for your model.

- Curate diverse data: Gather examples that reflect real-world situations, typical use cases, and even rare, edge cases to ensure thorough testing.

- Verify and update labels: Use controlled processes to double-check labels for accuracy and make updates when needed.

- Version and govern: Keep the dataset consistent and track changes over time to ensure reliable comparisons.

This process ensures your dataset remains a solid foundation for assessing AI performance.

What should I monitor after deploying an AI model?

After launching an AI model, it's crucial to keep a close eye on several factors: reliability, security, error handling, system uptime, performance metrics, and user experience. Tools like observability platforms, dashboards, and alert systems can help you track these aspects, ensuring the model operates smoothly and delivers on its intended goals.