How AI Predicts Student Outcomes Using Data

AI is changing how schools and colleges identify students who might struggle. Instead of waiting for grades or attendance issues, AI uses data to predict problems early, giving educators time to help. Here's how it works:

- What AI Analyzes: Academic records (GPA, test scores), online behavior (login frequency, assignment submissions), and personal factors (financial aid, first-generation status).

- Accuracy: In published studies, some hybrid models report accuracy as high as 98.8% when identifying at-risk students, though results vary by dataset and task.

- Benefits: Early warnings allow for targeted support, like tutoring or financial advice, improving retention and performance.

- Tools Used: AI models like decision trees, random forests, and neural networks analyze patterns and provide actionable insights.

AI systems also address broader challenges, such as identifying systemic inequalities, while privacy safeguards like encryption and local data storage protect sensitive information. Schools using these tools have seen better outcomes, including higher retention rates and reduced dropout risks.


How AI Predicts Student Outcomes

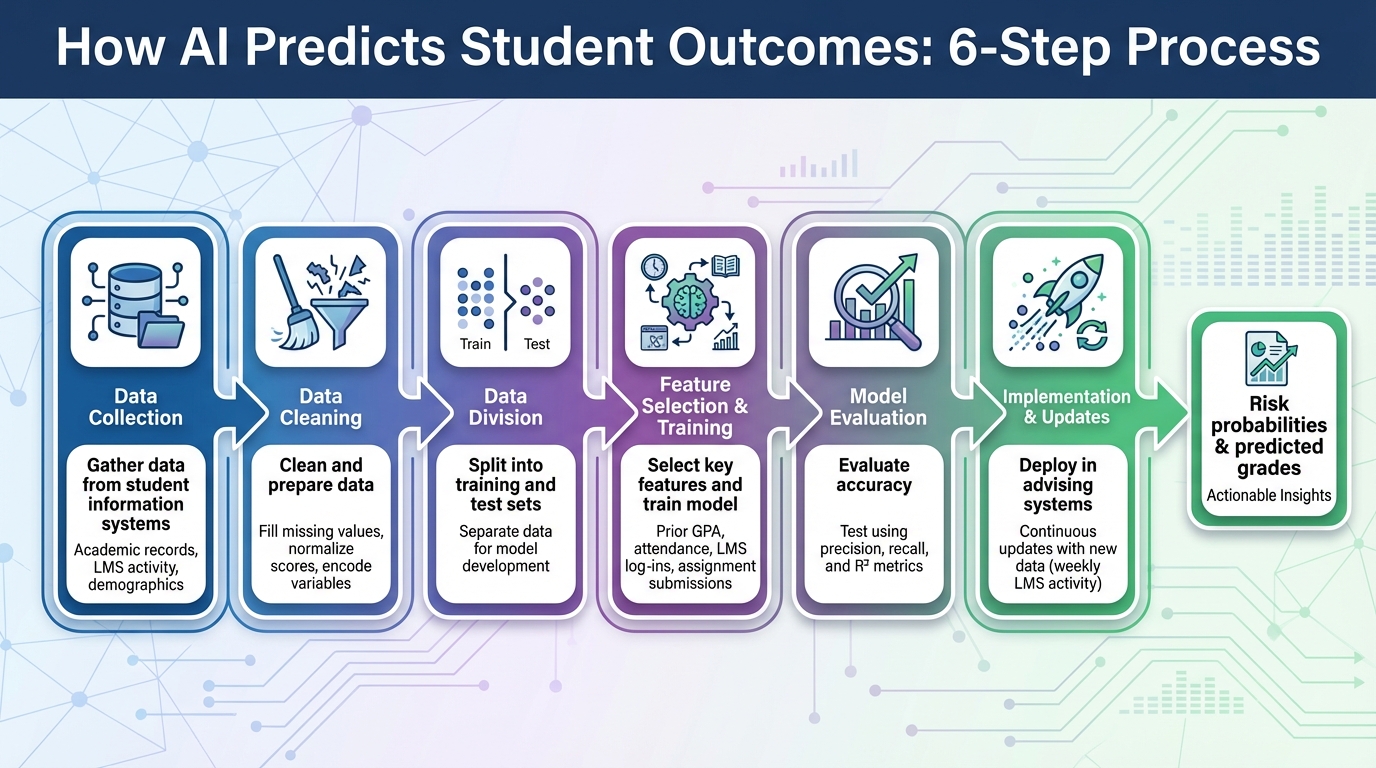

How AI Predicts Student Outcomes: 6-Step Process

AI prediction systems rely on a structured process to analyze and interpret student data. They start by gathering information from student information systems and cleaning it - this includes filling in missing values, normalizing scores, and encoding categorical variables. The data is then divided into training and test sets. Engineers select features, such as prior GPA, attendance, LMS (Learning Management System) log-ins, and assignment submissions, and train the model on them. Once trained, the model is evaluated using metrics suited to the task: precision and recall when it classifies students (for example, at risk or not), and R² when it predicts a numeric value such as a grade. After passing these evaluations, the model is deployed in advising systems, where it continuously updates predictions based on new data, such as weekly LMS activity, to provide insights like risk probabilities or predicted grades.
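The cleaning and splitting steps described above can be sketched in a few lines of plain Python. This is a minimal illustration, not how any particular institution's pipeline works: the records, field names (`prior_gpa`, `attendance`, `school_type`, `passed`), and helper functions are all hypothetical.

```python
import random
from statistics import mean

# Hypothetical records; field names and values are illustrative only.
students = [
    {"prior_gpa": 3.4, "attendance": 0.95, "school_type": "public", "passed": 1},
    {"prior_gpa": None, "attendance": 0.70, "school_type": "private", "passed": 0},
    {"prior_gpa": 2.1, "attendance": 0.60, "school_type": "public", "passed": 0},
    {"prior_gpa": 3.9, "attendance": 0.99, "school_type": "private", "passed": 1},
    {"prior_gpa": 2.8, "attendance": None, "school_type": "public", "passed": 1},
]
NUMERIC = ["prior_gpa", "attendance"]

def fill_missing(rows, columns):
    """Replace missing numeric values with the column mean."""
    filled = [dict(r) for r in rows]  # copy so the input is not modified
    for col in columns:
        col_mean = mean(r[col] for r in rows if r[col] is not None)
        for r in filled:
            if r[col] is None:
                r[col] = col_mean
    return filled

def min_max_normalize(rows, columns):
    """Rescale each numeric column to the range [0, 1]."""
    scaled = [dict(r) for r in rows]
    for col in columns:
        lo = min(r[col] for r in rows)
        hi = max(r[col] for r in rows)
        span = (hi - lo) or 1.0  # avoid dividing by zero for constant columns
        for r in scaled:
            r[col] = (r[col] - lo) / span
    return scaled

def one_hot(rows, column):
    """Encode a categorical column as 0/1 indicator columns."""
    categories = sorted({r[column] for r in rows})
    encoded = []
    for r in rows:
        new = {k: v for k, v in r.items() if k != column}
        for cat in categories:
            new[f"{column}_{cat}"] = 1 if r[column] == cat else 0
        encoded.append(new)
    return encoded

def train_test_split(rows, test_fraction=0.2, seed=0):
    """Shuffle reproducibly, then hold out a fraction of rows for testing."""
    shuffled = rows[:]
    random.Random(seed).shuffle(shuffled)
    n_test = max(1, round(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]

clean = one_hot(min_max_normalize(fill_missing(students, NUMERIC), NUMERIC), "school_type")
train, test = train_test_split(clean)
print(len(train), "training rows,", len(test), "test row(s)")
```

The sketch follows the order given in the text (clean, then split). In practice, many teams compute the fill and scaling statistics on the training set only, so that no information from the test set leaks into training.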

Data Sources AI Uses for Predictions

AI systems pull from several key data sources to make predictions about student outcomes:

- Academic performance data: Metrics like prior GPA, quiz and exam scores, assignment grades, and overall course performance are often the strongest indicators of final grades and program completion.

- Engagement and behavioral data: Information from LMS platforms - such as log-in frequency, time spent on tasks, participation in discussion forums, clickstream data, and assignment submission timing - helps models detect early signs of disengagement. In K–12 settings, attendance records are particularly valuable, as chronic absenteeism is closely linked to lower academic performance.

- Demographic and socioeconomic variables: Factors like first-generation college status, eligibility for financial aid, and neighborhood socioeconomic conditions help explain performance disparities. However, these variables must be handled carefully to avoid perpetuating inequities. Additionally, institutional and contextual data - such as student–teacher ratios, school type, and access to technology - play a role. For example, a study found that just five variables (socioeconomic level, institution type, student–teacher ratio, access to technology, and prior GPA) accounted for 72% of performance variability across 50,000 students.

By combining these diverse data points, machine learning models can identify patterns and predict outcomes with impressive accuracy.

Machine Learning Models Used in Education

Different machine learning models are applied in educational settings, each with its strengths:

- Decision trees: These models use straightforward rules (e.g., "GPA < 2.0 and attendance < 80% = high dropout risk") to classify students into categories like pass/fail or assign probabilities. Their tree-like structure makes them easy to interpret.

- Random forests: By training multiple decision trees on random subsets of data and averaging their predictions, random forests offer improved accuracy and reliability over single-tree models. They are widely used for tasks like predicting course failure and student retention.

- Gradient boosting models: Models like XGBoost refine predictions by correcting errors from previous iterations, achieving high accuracy. For instance, one study reported an R² of 0.91 and a 15% reduction in mean squared error using this approach. Hybrid models that combine techniques like decision trees, random forests, support vector machines, and neural networks have reached accuracy rates as high as 98.8% in identifying at-risk students across various datasets.

- Artificial neural networks: These models excel at capturing complex, non-linear relationships in data, such as sequences of assignment scores or detailed LMS clickstream logs. They are particularly effective when large, rich datasets are available. Neural networks are often paired with explainable AI tools like SHAP, which help clarify why a specific student is flagged as at risk, addressing concerns about the "black box" nature of these models.
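To make the first two model types concrete, here is a small sketch of the decision-tree rule quoted above and of the averaging step a random forest performs. It is a toy illustration: the three "trees" are hand-written rules with made-up probabilities, whereas a real random forest learns each tree from a random subset of the data. All names and thresholds other than the GPA and attendance rule are assumptions.

```python
def tree_rule(student):
    """The example rule from the text: GPA < 2.0 and attendance < 80%."""
    if student["gpa"] < 2.0 and student["attendance"] < 0.80:
        return "high dropout risk"
    return "lower risk"

# Three hypothetical "trees", each returning a risk probability.
# The probabilities are invented for illustration, not learned from data.
def tree_a(s):
    return 0.9 if s["gpa"] < 2.0 else 0.2

def tree_b(s):
    return 0.8 if s["attendance"] < 0.80 else 0.1

def tree_c(s):
    return 0.7 if s["late_submissions"] > 3 else 0.3

def forest_predict(student, trees):
    """Average the per-tree probabilities, as a random forest does."""
    return sum(tree(student) for tree in trees) / len(trees)

student = {"gpa": 1.8, "attendance": 0.75, "late_submissions": 1}
print(tree_rule(student))
print(round(forest_predict(student, [tree_a, tree_b, tree_c]), 2))
```

Averaging smooths out the quirks of any single tree: here two trees see high risk and one does not, so the combined estimate lands in between.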

These machine learning techniques, combined with robust data inputs, provide a powerful framework for predicting student outcomes and supporting educational decision-making.

Factors That Influence AI Predictions

AI models rely on a variety of factors to predict student outcomes, analyzing both individual and systemic data points. These variables not only shape predictions for individual students but also enhance the overall accuracy of AI models used in education. By understanding which factors carry the most influence, educators and administrators can better interpret these predictions and design targeted interventions.

Socioeconomic and School-Related Factors

External circumstances play a significant role in shaping AI predictions. Factors like family income, parental education, neighborhood conditions, and access to learning resources impact a student's learning environment and stability.

School-level variables are equally critical. Student-to-teacher ratios, school funding, infrastructure quality, and access to technology all contribute to how AI systems assess academic risk. A study examining data from 50,000 students found that just five factors - socioeconomic status, type of institution, student-to-teacher ratio, access to technology, and prior GPA - accounted for 72% of the variation in academic performance. The study also highlighted that improving teacher training and technology resources could increase academic success by 18% and lower dropout rates by 12%.
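A statement like "accounted for 72% of the variation" is usually a statement about R², the coefficient of determination, though the text does not say which statistic the study used. The sketch below shows how R² and mean squared error are computed; the grade values are invented for illustration and are not taken from the study.

```python
from statistics import mean

def mean_squared_error(actual, predicted):
    """Average squared gap between actual and predicted values."""
    return sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual)

def r_squared(actual, predicted):
    """Share of the variation in `actual` that the predictions explain."""
    mean_actual = mean(actual)
    ss_res = sum((a - p) ** 2 for a, p in zip(actual, predicted))  # unexplained
    ss_tot = sum((a - mean_actual) ** 2 for a in actual)           # total
    return 1 - ss_res / ss_tot

# Hypothetical final grades on a 4.0 scale.
actual = [2.0, 3.0, 4.0, 3.0]
predicted = [2.5, 3.0, 3.5, 3.0]

print(r_squared(actual, predicted))
print(mean_squared_error(actual, predicted))
```

An R² of 1.0 means the predictions match every student exactly, while a value near 0 means the model does no better than guessing the average grade for everyone.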

These institutional factors enable AI systems to differentiate between students facing personal challenges and those affected by resource limitations. For example, lower student-to-teacher ratios are often linked to better individual support and improved outcomes, which AI models recognize as positive indicators. While these external factors are crucial, real-time data on student engagement provides even more immediate insights into academic progress.

Student Engagement and Performance Data

Student engagement is often among the most reliable predictors of academic outcomes. Data like attendance records, participation in class activities, assignment submission timelines, and interactions with online learning tools - combined with metrics such as quiz scores, exams, assignments, and cumulative GPA - offer a clear picture of both knowledge acquisition and motivation.

Research using explainable AI has shown that quiz scores, midterm exams, assignments, and class activities are the most influential factors in predicting final grades. This information allows educators to identify areas where students need additional support early on. Advanced AI models also monitor engagement trends over time, adjusting risk assessments as attendance, participation, or submission patterns change throughout the semester. This dynamic tracking ensures that predictions remain relevant as students' circumstances evolve.
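One simple way to picture this kind of dynamic tracking is to compare a student's recent activity with their own earlier baseline. The sketch below flags weeks where average LMS log-ins over the last three weeks fall below half of the average for the three weeks before that. The window size, threshold, and log-in counts are assumptions chosen for illustration. Production systems typically feed many such signals into a trained model rather than a single rule.

```python
def weekly_risk_flags(logins_per_week, window=3, drop_threshold=0.5):
    """Flag weeks where recent activity drops well below the earlier baseline."""
    flags = []
    for week in range(len(logins_per_week)):
        if week < 2 * window - 1:
            flags.append(False)  # not enough history to compare yet
            continue
        recent = logins_per_week[week - window + 1 : week + 1]
        baseline = logins_per_week[week - 2 * window + 1 : week - window + 1]
        recent_avg = sum(recent) / window
        baseline_avg = sum(baseline) / window
        flags.append(baseline_avg > 0 and recent_avg < drop_threshold * baseline_avg)
    return flags

# Hypothetical weekly log-in counts for one student across a term.
logins = [10, 12, 11, 9, 4, 2, 1, 8, 9, 10]
for week, flagged in enumerate(weekly_risk_flags(logins), start=1):
    print(f"week {week}: {'at risk' if flagged else 'ok'}")
```

With this data the flag turns on in weeks 6 through 8, when activity collapses, and clears again once the student's log-ins recover.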


AI Applications in Education

AI-powered prediction tools are making a tangible impact in education by enhancing student retention strategies and creating personalized learning experiences.

Case Study: Improving Student Retention

In 2021, the University of Oregon implemented an AI model that analyzed a mix of 80 institutional variables (such as student ID swipes, academic records, and financial need) and 33 public variables, spanning 12 years of data (2010–2021). This model was designed to predict first-year retention rates with greater accuracy than traditional methods.

What made this system stand out was its ability to pinpoint students most at risk of not returning for their second term. By identifying these students early, advisors could intervene before minor challenges turned into major roadblocks. Unlike older approaches that focused on students with low predicted GPAs, this AI-driven method allowed the University of Oregon's UESS team to shift their attention to students who were more likely to drop out entirely. This proactive approach helped direct resources where they were needed most, offering timely support to vulnerable students and improving overall retention rates.

Personalized Learning Pathways

Beyond retention, AI is transforming the way students learn by delivering personalized education tailored to their unique needs. These systems use data from quizzes, assignments, and engagement metrics to help educators identify specific areas where students are struggling.

One key advantage of these systems is the use of explainable AI, which provides detailed insights into learning gaps. This allows teachers to move beyond guesswork and offer targeted support that directly addresses the root of the problem. As schools and universities integrate more data - such as written responses and behavioral patterns - AI tools become even more adept at understanding individual challenges. This paves the way for customized learning journeys, replacing the outdated one-size-fits-all approach with strategies that adapt to each student's circumstances.

Challenges and Ethical Concerns

AI prediction tools have shown promise in improving educational outcomes, but they also bring ethical dilemmas to the forefront. Two major concerns stand out: algorithmic bias and data privacy risks. These issues become especially critical when applying AI systems in real-world educational settings. Let’s take a closer look at how these challenges can be addressed.

Reducing Bias in AI Models

AI models often rely on historical data, which can unintentionally reinforce existing inequalities in education. A Brookings analysis of a college-completion prediction model revealed troubling racial disparities: Black students who eventually graduated were inaccurately predicted to fail 20% of the time, Hispanic students 21%, compared to 12% for white students and only 6% for Asian students. Such false predictions can have serious consequences, from lowering expectations to limiting opportunities and access to support.

However, there are ways to counteract these biases. For example, researchers applied fairness-aware techniques to the same model, reducing the false negative rate for Hispanic students who graduated from 21% to around 2%. Additionally, institutions can conduct audits to evaluate model performance across different demographic groups. Allowing human advisors to review and override AI-generated risk scores ensures that predictions are treated as guidance rather than final decisions. Importantly, pairing these predictions with supportive interventions - like academic resources or counseling - can help mitigate potential harm.
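An audit of the kind described above can start with a very small calculation: for each demographic group, what share of students who actually graduated did the model predict would not? The sketch below computes that false negative rate per group. The records, group labels, and field names are hypothetical and are not drawn from the Brookings analysis.

```python
from collections import defaultdict

def false_negative_rate_by_group(records):
    """Among actual graduates, the share predicted not to graduate, per group."""
    graduates = defaultdict(int)
    missed = defaultdict(int)
    for r in records:
        if r["graduated"]:
            graduates[r["group"]] += 1
            if not r["predicted_graduate"]:
                missed[r["group"]] += 1
    return {group: missed[group] / graduates[group] for group in graduates}

# Hypothetical audit data: one row per student.
records = [
    {"group": "A", "graduated": True, "predicted_graduate": True},
    {"group": "A", "graduated": True, "predicted_graduate": False},
    {"group": "A", "graduated": True, "predicted_graduate": True},
    {"group": "A", "graduated": True, "predicted_graduate": True},
    {"group": "A", "graduated": False, "predicted_graduate": False},
    {"group": "B", "graduated": True, "predicted_graduate": True},
    {"group": "B", "graduated": True, "predicted_graduate": True},
    {"group": "B", "graduated": False, "predicted_graduate": True},
]

rates = false_negative_rate_by_group(records)
gap = max(rates.values()) - min(rates.values())
print(rates)
print("largest gap between groups:", gap)
```

A large gap between groups is a signal to investigate further, for example by revisiting the training data or applying fairness-aware techniques, before the model's scores are used in advising.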

While addressing bias is crucial, protecting student data is equally important to build trust in AI systems.

Protecting Student Data Privacy

Bias raises questions of fairness, and privacy concerns add another layer of complexity to AI deployment in education. These systems often rely on sensitive data, including academic records, behavioral logs, and demographic or financial aid information. This raises significant risks, such as unauthorized access, misuse of data, or long-term profiling. Regulations like FERPA (Family Educational Rights and Privacy Act) in the United States provide safeguards by limiting the disclosure of personally identifiable information without consent.

To minimize risks, institutions should practice data minimization - collecting only the data a model actually needs and avoiding highly sensitive information unless it is clearly justified - while encrypting data both at rest and in transit and enforcing strict access controls. Some organizations are also experimenting with privacy-preserving systems that avoid centralized data storage.
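As a rough sketch of data minimization, the example below keeps only an allowlisted set of fields and replaces the student ID with a keyed hash before a record is passed to a model. The field names, the allowlist, and the key are hypothetical. This illustrates pseudonymization only: it is not encryption, and it does not by itself satisfy FERPA or any other regulation.

```python
import hashlib
import hmac

# Hypothetical allowlist: the only fields the model is permitted to see.
ALLOWED_FIELDS = {"prior_gpa", "attendance", "lms_logins"}

def pseudonymize(student_id: str, secret_key: bytes) -> str:
    """Derive a stable pseudonym from the ID using a keyed hash (HMAC-SHA256)."""
    return hmac.new(secret_key, student_id.encode("utf-8"), hashlib.sha256).hexdigest()

def minimize(record: dict, secret_key: bytes) -> dict:
    """Drop every field not on the allowlist and swap the ID for a pseudonym."""
    slim = {k: v for k, v in record.items() if k in ALLOWED_FIELDS}
    slim["pseudo_id"] = pseudonymize(record["student_id"], secret_key)
    return slim

# Illustrative key only; a real key would be generated randomly and
# kept in a secrets manager, never hard-coded in source.
key = b"example-key-for-illustration-only"

record = {
    "student_id": "S-1001",
    "name": "Jane Doe",
    "home_address": "123 Example St",
    "prior_gpa": 3.2,
    "attendance": 0.91,
    "lms_logins": 14,
}
print(minimize(record, key))
```

Because the same ID and key always yield the same pseudonym, records for one student can still be linked over time, while anyone without the key cannot easily map a pseudonym back to a student.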

For instance, NanoGPT employs a local storage approach, keeping data on the user’s device rather than on remote servers. This method reduces the risk of large-scale breaches and limits third-party access to sensitive information. In an educational setting, a similar approach could involve running predictive models on secure, institution-managed servers, ensuring that student identifiers never leave the school’s protected environment. NanoGPT’s policy of not using user data for AI training offers another layer of reassurance. By adopting these privacy-focused designs, educational institutions can harness AI’s capabilities while maintaining robust privacy protections.

Tackling these challenges is essential to ensure that predictive models genuinely support educational success without creating new barriers.

Conclusion: AI's Future in Education

AI is reshaping education by predicting student outcomes and enabling timely interventions. By analyzing a wide range of data - like academic performance, attendance, and socioeconomic factors - machine learning models can pinpoint at-risk students with remarkable accuracy. For instance, an XGBoost model applied to 50,000 student records achieved an impressive R² of 0.91, while hybrid models have pushed accuracy levels close to 99%. These numbers highlight the power of AI in driving actionable, data-informed strategies.

One study suggests that using AI insights to enhance teacher training and broaden access to technology could improve student performance by 18% and lower dropout rates by 12%. Tools like SHAP and LIME provide clarity by identifying key factors behind predictions, empowering educators to make targeted interventions. This transparency is crucial - it ensures AI is seen as a tool to support, not replace, human judgment.

However, with great potential comes responsibility. Ethical considerations, such as fairness-aware methods and privacy protections, must guide AI's integration into education. Regular audits are essential to prevent biases that could amplify racial or socioeconomic disparities. Institutions should adopt strategies to minimize prediction errors across diverse student populations. Privacy is equally critical. Solutions like local data storage, strict access controls, and adherence to FERPA regulations safeguard sensitive information. Platforms such as NanoGPT set a strong example by storing data directly on users' devices and ensuring AI models do not train on user data - an approach that prioritizes privacy.

The road ahead for AI in education requires balancing innovation with responsibility. Institutions need high-quality data, robust safeguards, and actionable policies that promote equity and transparency. Educators must be equipped to interpret AI-driven insights, and policymakers must establish clear guidelines to ensure ethical use. When implemented thoughtfully, AI doesn’t just predict outcomes - it transforms education by personalizing learning, optimizing resources, and ensuring every student gets the support they need to thrive.

FAQs

How does AI protect student privacy when analyzing data?

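AI systems protect student privacy through a combination of practices: collecting only the data a model needs, encrypting data in storage and in transit, enforcing strict access controls, and complying with regulations like FERPA. Some platforms go further by avoiding centralized storage. NanoGPT, for example, keeps data stored directly on your device rather than on external servers, and it doesn’t use your data to train its AI models, offering an extra layer of security and peace of mind.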

How does AI ensure fairness and avoid bias when predicting student outcomes?

AI systems rely on several methods to reduce bias and ensure fairness when predicting student outcomes. These methods include using diverse and representative datasets during model training, employing algorithms specifically designed to identify and address biases, and regularly reviewing predictions to catch and address any unintended disparities.

Moreover, collaboration between developers and educators plays a crucial role. This partnership helps ensure that AI systems adhere to ethical standards and are consistently updated to meet changing expectations. Transparency in processes and a strong focus on accountability are essential for building trust and maintaining fairness in these predictions.

How can educators use AI to better understand and support their students?

Educators now have access to AI tools that can transform how they understand and support their students. These tools can analyze data to uncover insights about student performance, learning habits, and areas where extra help might be needed. With this information, teachers can design learning experiences that are more tailored to each student’s needs and provide focused support to boost their success.

Take platforms like NanoGPT, for example. They enable educators to tap into advanced AI models that can spot trends, create customized content, and highlight growth opportunities. This kind of support allows teachers to make well-informed decisions, ultimately creating a more engaging and productive learning environment.