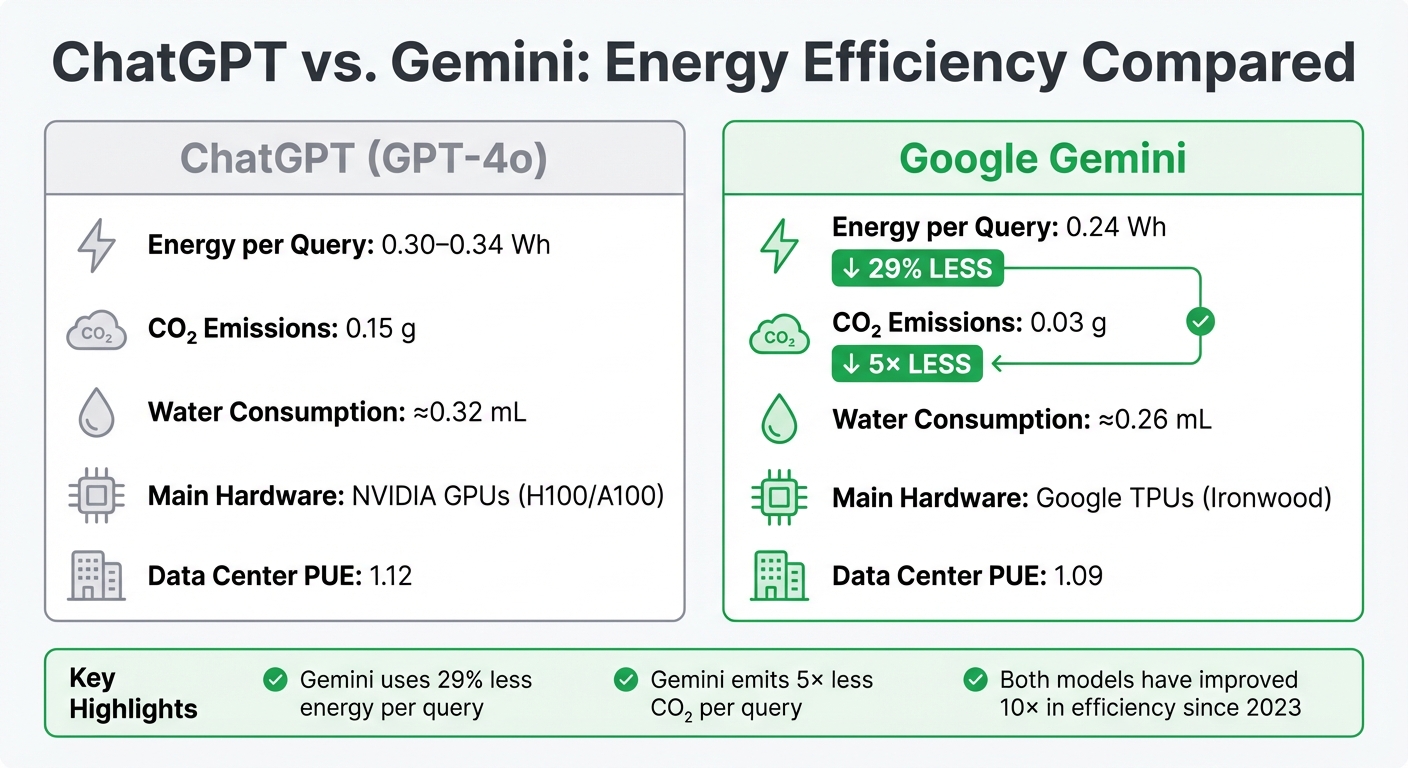

ChatGPT vs. Gemini: Energy Efficiency Compared

Looking for the most energy-efficient AI? Here’s the quick answer: Gemini consumes less energy and produces fewer carbon emissions per query compared to ChatGPT. While both models have improved efficiency over time, Gemini leads with 0.24 Wh per query versus ChatGPT's 0.30–0.34 Wh, and emits 0.03 g of CO₂ compared to ChatGPT's 0.15 g.

Key Findings:

- Training Energy: Both models require significant power, but Gemini benefits from Google's optimized infrastructure and hardware.

- Inference Energy: Gemini uses roughly 20–29% less energy per query than ChatGPT (0.24 Wh versus 0.30–0.34 Wh).

- Hardware: ChatGPT relies on NVIDIA GPUs, while Gemini uses Google's custom TPUs, which are more efficient.

- Carbon Emissions: Gemini emits about one-fifth the CO₂ per query (0.03 g versus 0.15 g).

Quick Comparison:

| Metric | ChatGPT (GPT-4o) | Google Gemini |
|---|---|---|
| Energy per Query | 0.30–0.34 Wh | 0.24 Wh |
| CO₂ Emissions per Query | 0.15 g | 0.03 g |
| Water Consumption per Query | ≈0.32 mL | ≈0.26 mL |
| Main Hardware | NVIDIA GPUs | Google TPUs |
| Data Center PUE | 1.12 | 1.09 |

Gemini’s efficiency stems from custom hardware, smarter architecture, and advanced optimization techniques. ChatGPT has made strides in reducing energy use but still lags behind Gemini for standard tasks. For businesses and users, choosing Gemini could mean lower costs and a smaller environmental footprint.

[Figure: ChatGPT vs. Gemini energy efficiency comparison, per-query metrics]

ChatGPT's Energy Consumption

Training Phase Metrics

Training a model like GPT-4o requires a massive amount of electricity. The process draws between 20 and 25 megawatts (MW) of power over a three-month training period. To put that into perspective, this is roughly comparable to the electricity demand of 20,000 American homes over the same timeframe.

But the energy cost doesn’t stop there. The development phase - covering experimentation and iterative testing - adds about 50% more to the total energy usage. During training, power usage varies widely, ranging from 15% to 85% of hardware capacity, which makes energy management more complex.
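To see what those power figures mean in energy terms, here is a minimal sketch that converts a sustained power draw into total energy and then adds the roughly 50% development overhead mentioned above. It assumes the 20–25 MW figure is an average draw held for the whole run and that "three months" means 90 days; neither assumption comes from OpenAI, and the function names are purely illustrative.

```python
HOURS_PER_DAY = 24
TRAINING_DAYS = 90  # assumption: "three months" taken as 90 days


def training_energy_gwh(power_mw: float, days: float) -> float:
    """Energy in GWh for a constant average power draw held for `days`."""
    energy_mwh = power_mw * HOURS_PER_DAY * days
    return energy_mwh / 1000  # MWh -> GWh


def with_development_overhead(training_gwh: float, overhead: float = 0.5) -> float:
    """Add the development share (experiments, iterative testing) on top of training."""
    return training_gwh * (1 + overhead)


if __name__ == "__main__":
    for power_mw in (20, 25):
        training = training_energy_gwh(power_mw, TRAINING_DAYS)
        total = with_development_overhead(training)
        print(f"{power_mw} MW: training {training:.1f} GWh, "
              f"with development {total:.1f} GWh")
```

Under these assumptions the training run alone lands in the tens of gigawatt-hours. Because real utilization swings between 15% and 85% of capacity, the actual total could differ noticeably.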

Interestingly, earlier versions of the model, such as GPT-3, were even more power-hungry.

Inference Energy Usage

Although training is energy-intensive, the energy efficiency of inference - what happens when users interact with the model - has improved significantly. For example, processing a single query with GPT-4o now uses about 0.3 Wh of electricity. That’s a 10× improvement compared to the 3 Wh per query estimated for older models as recently as early 2023.

Several advancements have contributed to this efficiency:

- Hardware upgrades: Transitioning from NVIDIA A100 GPUs to H100 GPUs has boosted performance per watt.

- Smarter architecture: GPT-4o uses a Mixture-of-Experts (MoE) design, which activates only about 100 billion parameters out of the estimated 200–400 billion total, saving energy.

- Precision optimization: Moving from 16-bit (FP16) to 8-bit (FP8) precision reduces energy use per token by about 30%.

However, energy consumption increases with input length. For example, processing 100,000 tokens can consume nearly 40 Wh, which is more than 130× the energy of a standard query. Given that ChatGPT processes around 1 billion messages daily from its 300 million users, the total power required for inference is estimated to be about 12.5 MW.
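The arithmetic behind those two figures is easy to check. The sketch below uses only the numbers quoted above (1 billion messages per day, 0.3 Wh per standard query, 40 Wh for a 100,000-token prompt). It assumes every message costs the same as a standard query, which is a simplification, and the function names are illustrative.

```python
def average_power_mw(queries_per_day: float, wh_per_query: float) -> float:
    """Average continuous power (MW) needed to serve a daily query volume."""
    daily_wh = queries_per_day * wh_per_query
    average_watts = daily_wh / 24  # spread the daily energy over 24 hours
    return average_watts / 1e6     # W -> MW


def energy_multiple(long_prompt_wh: float, standard_wh: float) -> float:
    """How many standard queries one long prompt is worth in energy terms."""
    return long_prompt_wh / standard_wh


if __name__ == "__main__":
    print(f"Average inference power: {average_power_mw(1e9, 0.3):.1f} MW")
    print(f"100k-token prompt: {energy_multiple(40, 0.3):.0f}x a standard query")
```

The fleet-wide number is an average. Peak-hour demand would be higher, and a shift toward longer prompts would raise it further.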


Gemini's Energy Consumption

Training Phase Metrics

Google employs a "full-stack" approach to optimize energy use, focusing on everything from model architecture to custom hardware. A key element of this strategy is the Transformer architecture, which offers a 10–100× efficiency boost compared to older designs. Gemini also incorporates a Mixture-of-Experts (MoE) system, activating only a small subset of parameters during training. This approach reduces computation and data transfer needs by 10–100×.

Another technique, Accurate Quantized Training (AQT), allows models to operate with fewer bits of precision, significantly lowering compute and energy demands while maintaining output quality. Although Google hasn’t shared exact energy figures for training Gemini, industry data provides some context. For example, training Llama 3.1 405B required around 27.51 gigawatt-hours (GWh) of energy and resulted in 11,390 tons of CO₂e emissions.

Google's data centers also contribute to energy efficiency. They operate with an average Power Usage Effectiveness (PUE) of 1.09, meaning cooling, power conversion, and other non-computing overhead adds only about 9% on top of the energy used by the computing equipment itself (roughly 8% of total facility energy) - far better than the industry average. Additionally, Google's latest TPU, called "Ironwood", is 30× more energy-efficient than its first-generation TPU. These innovations in training efficiency lay the groundwork for energy savings during the inference phase.
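PUE is defined as total facility energy divided by the energy delivered to IT equipment, so a single PUE value can be read two ways: overhead relative to IT energy, or overhead as a share of the whole facility. A minimal sketch of both readings, using the 1.09 figure above and the 1.12 figure this article lists for ChatGPT's data centers (the function names are illustrative):

```python
def overhead_relative_to_it(pue: float) -> float:
    """Overhead energy as a fraction of IT equipment energy."""
    if pue < 1:
        raise ValueError("PUE cannot be below 1.0")
    return pue - 1


def overhead_share_of_total(pue: float) -> float:
    """Overhead energy as a fraction of total facility energy."""
    if pue < 1:
        raise ValueError("PUE cannot be below 1.0")
    return 1 - 1 / pue


if __name__ == "__main__":
    for label, pue in (("Google", 1.09), ("Azure", 1.12)):
        print(f"{label}: PUE {pue} -> "
              f"{overhead_relative_to_it(pue):.1%} on top of IT energy, "
              f"{overhead_share_of_total(pue):.1%} of total facility energy")
```

The second reading is the one that lines up with per-query breakdowns, where overhead is expressed as a slice of the total energy per prompt.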

Inference Energy Usage

When it comes to inference, Gemini stands out for its low energy requirements. A single Gemini prompt consumes 0.24 Wh, which is 29% less than ChatGPT's 0.34 Wh per query. To put this into perspective, the energy used by one Gemini prompt is equivalent to watching television for 9 seconds.

Between May 2024 and May 2025, Google reduced Gemini's energy consumption per prompt by an impressive 33×, all while improving response quality. Carbon emissions saw an even steeper reduction - dropping 44× to just 0.03 grams of CO₂e per prompt. Addressing public concerns, Google's Chief Scientist Jeff Dean highlighted the model's minimal environmental impact:

"People shouldn't have major concerns about the energy usage or the water usage of Gemini models... it's actually equivalent to things you do without even thinking about it on a daily basis."

Custom TPUs are the primary energy consumers during inference, accounting for 58% of the energy used per query. The remaining energy is distributed among host CPUs/memory (25%), idle backups (10%), and data center overhead (8%); the shares add up to 101% because each is rounded. However, energy use does scale with prompt complexity. For instance, a prompt with 50,000 input tokens requires roughly 3.25× more energy than one with 300 tokens. Even so, the increase is far smaller than the roughly 167× growth in input length.
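To turn those percentages into watt-hours, the sketch below splits the 0.24 Wh per-prompt figure across the four components. The published shares are rounded, so the code normalizes them by their sum rather than assuming they total exactly 100; the dictionary keys and function name are illustrative.

```python
# Rounded shares of per-prompt energy, as quoted in the text.
SHARES_PERCENT = {
    "TPUs": 58,
    "host CPUs and memory": 25,
    "idle backup capacity": 10,
    "data center overhead": 8,
}


def split_energy(total_wh: float, shares: dict[str, float]) -> dict[str, float]:
    """Allocate total energy across components in proportion to their shares."""
    weight = sum(shares.values())  # normalize so rounding drift does not leak in
    return {name: total_wh * share / weight for name, share in shares.items()}


if __name__ == "__main__":
    for component, wh in split_energy(0.24, SHARES_PERCENT).items():
        print(f"{component}: {wh * 1000:.0f} mWh")
```

Normalizing is a design choice made for this example. Scaling each share by a flat 1/100 would overshoot the 0.24 Wh total by about 1%.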

Energy Efficiency Comparison

Side-by-Side Comparison Table

When it comes to energy use, Gemini outperforms ChatGPT with a consumption of just 0.24 Wh per query, compared to ChatGPT's 0.30–0.34 Wh. That’s a 20–29% reduction, depending on which end of ChatGPT's range is used. Carbon emissions show an even starker contrast: Gemini emits only 0.03 grams of CO₂ per query, while ChatGPT produces around 0.15 grams. In other words, Gemini is roughly five times cleaner in terms of emissions.

| Metric | ChatGPT (GPT-4o) | Google Gemini |
|---|---|---|
| Energy per Query | 0.30–0.34 Wh | 0.24 Wh |
| CO₂ Emissions per Query | 0.15 g | 0.03 g |
| Water Consumption per Query | ≈0.32 mL | ≈0.26 mL |
| Primary Hardware | NVIDIA H100/A100 GPUs | Google TPU (Ironwood) |
| Data Center PUE | 1.12 (Azure) | 1.09 (Google) |

These differences highlight the importance of the technology and strategies driving each model's energy efficiency.
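The table's numbers can be cross-checked with a few lines of arithmetic. The sketch below computes the energy gap at both ends of ChatGPT's range and derives an implied carbon intensity (grams of CO₂ per kWh) for each model by dividing emissions by energy. That derived intensity is an illustration built from this article's figures, not a value reported by either company, and the function names are illustrative.

```python
def percent_less(lower: float, higher: float) -> float:
    """How much smaller `lower` is than `higher`, as a percentage of `higher`."""
    return (higher - lower) / higher * 100


def implied_carbon_intensity(grams_per_query: float, wh_per_query: float) -> float:
    """Implied grams of CO2 per kWh, derived from per-query emissions and energy."""
    return grams_per_query / wh_per_query * 1000  # Wh -> kWh


if __name__ == "__main__":
    gemini_wh, gemini_g = 0.24, 0.03
    chatgpt_g = 0.15
    for chatgpt_wh in (0.30, 0.34):
        print(f"vs {chatgpt_wh} Wh: Gemini uses "
              f"{percent_less(gemini_wh, chatgpt_wh):.1f}% less energy; "
              f"ChatGPT implied intensity "
              f"{implied_carbon_intensity(chatgpt_g, chatgpt_wh):.0f} g/kWh")
    print(f"Gemini implied intensity: "
          f"{implied_carbon_intensity(gemini_g, gemini_wh):.0f} g/kWh")
    print(f"Emissions ratio: {chatgpt_g / gemini_g:.1f}x")
```

On these figures, the energy gap is 20–29% while the emissions gap is about 5×. That suggests most of the carbon difference comes from how the electricity is sourced or accounted for rather than from the energy per query itself.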

What Affects Energy Efficiency

A variety of factors influence the energy efficiency of these AI models. Let’s break them down:

- Hardware and Infrastructure: Gemini runs on Google's custom TPUs (Ironwood), which are specifically designed for AI tasks. ChatGPT, on the other hand, operates using NVIDIA's H100 and A100 GPUs.

- Model Architecture: Both models use Mixture of Experts (MoE) techniques. This approach activates only the necessary parameters for each query, which helps scale computation efficiently.

- Optimization Techniques: Gemini incorporates speculative decoding and model distillation to make its inference process more streamlined. Meanwhile, ChatGPT optimizes performance through continuous batching and advanced GPU utilization.

- Prompt Characteristics: The energy required to process a query can vary significantly depending on the input and output. For example, a lengthy input of 100,000 tokens in ChatGPT can demand up to 40 Wh per query. Similarly, generating 900 output tokens consumes approximately 11 times more energy than producing just 100 tokens. Running multiple prompts in batches can help lower the energy cost per query.

These insights reveal how hardware, software, and even user behavior all play a role in determining the energy efficiency of AI models.


Conclusion

When comparing energy efficiency, Gemini stands out as the more efficient option for standard queries. It uses approximately 0.24 Wh per prompt, compared to ChatGPT's 0.30–0.34 Wh, and emits significantly less carbon - just 0.03 g versus 0.15 g per query. This advantage is largely due to Google's custom TPU hardware and highly efficient data centers, which operate with a Power Usage Effectiveness (PUE) of 1.09.

Both Gemini and ChatGPT rely on advanced hardware and architectural improvements to enhance efficiency. However, Gemini's integrated optimizations across its entire system give it a clear lead for everyday tasks. That said, energy consumption can spike with complex prompts requiring extensive reasoning, often diminishing these baseline efficiency gains.

Key recommendations: For simpler tasks, opt for lightweight versions like "mini" or "flash" models. Grouping queries together can also help reduce energy use. Reserve more resource-intensive reasoning models for situations where deep logic is absolutely necessary. For business leaders, the infrastructure behind a provider - like hardware efficiency and commitments to carbon-free energy - can play a pivotal role in scaling operations sustainably.

Even as per-query efficiency improves, the growing adoption of AI presents a bigger challenge. With billions of daily interactions projected, total energy demand is expected to rise sharply. The real test isn't just about making individual models more efficient but also managing the rapid increase in usage that these advancements enable.

FAQs

How does Gemini achieve better energy efficiency compared to ChatGPT?

Gemini stands out for its focus on energy efficiency, using about the same amount of energy per query as a standard web search. This is made possible by Google's emphasis on fine-tuning software performance and incorporating clean energy solutions in its operations.

On the other hand, ChatGPT generally consumes more energy per query. For users who care about reducing energy use and minimizing their environmental footprint, Gemini offers a more eco-friendly alternative.

How do hardware differences affect the energy efficiency of ChatGPT and Gemini?

The hardware behind AI models plays a huge role in their energy consumption. Take Gemini, for example - it uses about 0.24 Wh per prompt, compared with roughly 0.30–0.34 Wh for ChatGPT (GPT-4o). Measured against the 3 Wh per prompt estimated for older ChatGPT models in early 2023, the gap is about 12.5×. Why the difference? It comes down in large part to Gemini’s hardware and infrastructure, which are specifically designed to minimize energy use.

Gemini also has the advantage of running on Google's advanced systems. These systems are built around custom TPUs and integrate renewable energy sources, further cutting down on environmental impact. ChatGPT, by contrast, runs on NVIDIA H100 and A100 GPUs in data centers with slightly higher overhead (a PUE of 1.12 versus 1.09), which contributes to its higher energy use per query. This comparison makes it clear: the way hardware and infrastructure are designed has a direct impact on how energy-efficient AI models can be.

How do carbon emissions influence the choice between ChatGPT and Gemini?

When comparing ChatGPT and Gemini, carbon emissions become a key consideration due to the energy required to power these AI models. For instance, Gemini uses approximately 0.24 Wh per query, which translates to about 0.03 g of CO₂, compared with around 0.15 g per query for ChatGPT.

The environmental impact of AI models depends heavily on the energy sources fueling the data centers they operate from. Choosing models that consume less energy or are backed by renewable energy sources can play a role in lowering overall carbon emissions.