Data Minimization Strategies for AI Models

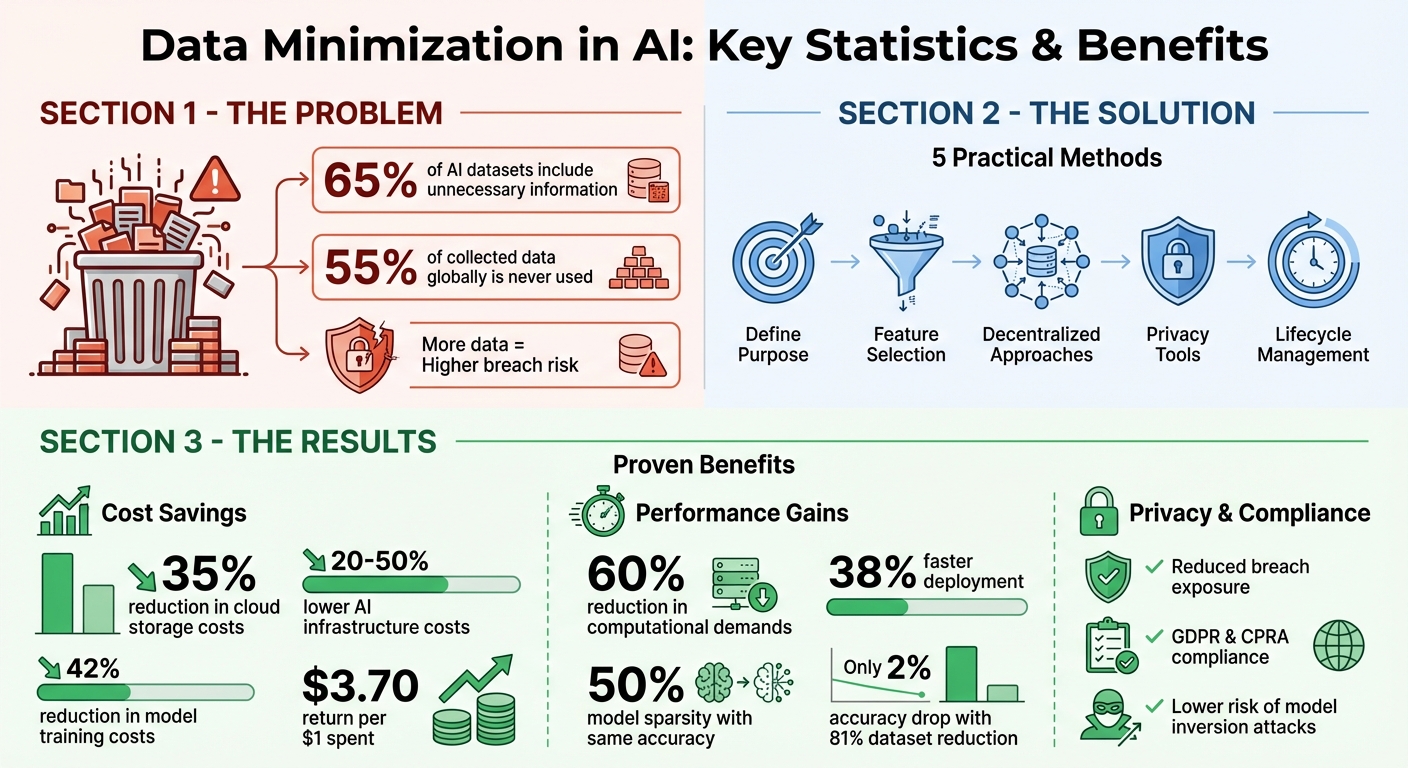

Collect less, achieve more. Data minimization means using only the data you truly need for AI projects. Why? Over 65% of AI datasets include unnecessary information, and 55% of collected data globally is never used. This wastes resources, increases security risks, and violates privacy laws like GDPR and CPRA.

Key Takeaways:

- Privacy and Security: Less data reduces exposure to breaches and attacks like model inversion.

- Legal Compliance: Avoid fines by adhering to rules on collecting only necessary data.

- Efficiency: Smaller datasets lower costs, speed up training, and improve AI performance.

Practical Methods:

- Define Purpose: Only collect data relevant to your AI goals.

- Feature Selection: Use techniques like L1 regularization to remove irrelevant features.

- Decentralized Approaches: Federated learning keeps data local, reducing risks.

- Privacy Tools: Differential privacy and synthetic data protect sensitive information.

- Lifecycle Management: Regularly delete outdated data and enforce storage limits.

By focusing on what matters, you’ll not only protect privacy but also cut costs and improve results.

Data Minimization Benefits: Cost Savings and Performance Statistics for AI Models

Problems with Collecting Too Much Data

Privacy and Security Threats

Gathering excessive data comes with serious risks, particularly when it comes to privacy and security. The more data you store, especially across multiple locations or with third-party vendors, the more vulnerable you become to breaches.

The dangers aren't limited to traditional data theft. Model inversion attacks let attackers piece together sensitive personal information from your training data by analyzing a model's inputs and outputs. For instance, researchers managed to reconstruct face images from a facial recognition system with an impressive 95% accuracy, using nothing more than the names and confidence scores the system produced. Similarly, membership inference attacks can expose whether a specific individual's data was included in the training set - this is particularly concerning when dealing with sensitive groups, like patients with rare medical conditions.

Even the tools you use to develop AI models can be a weak point. Machine learning frameworks are incredibly complex, often containing hundreds of thousands of lines of code and relying on numerous external dependencies. Each dependency is a potential vulnerability. Take NumPy, a widely-used Python library: in January 2019, a security flaw could have allowed attackers to execute malicious code disguised as training data. The more data you feed into these systems, the more you amplify your exposure to such risks.

And it doesn’t stop at technical threats. Over-collection of data also opens the door to significant legal challenges.

Legal Compliance Issues

Regulations like GDPR and the California Privacy Rights Act enforce strict data minimization rules. Collecting more data than necessary for your stated purpose is a direct violation, and the penalties can be severe.

Consider the 2021 case in Sweden, where the Data Protection Authority banned the use of facial recognition for tracking student attendance. The ruling found that collecting biometric data for this purpose violated GDPR's principle of proportionality, which requires that data collection methods be appropriately limited to their intended goals. This highlights a critical point: collecting only what’s strictly necessary isn’t just good practice - it’s the law.

"Broad data minimization principles ('collect no more data than necessary'), a core part of data privacy laws like the EU's GDPR, have been woefully underenforced and given too much interpretive wiggle room." - AI Now Institute

Legal risks don’t end with data collection. Failing to delete outdated training data or implement proper retention policies can lead to ongoing violations of storage limitation rules. Moreover, when a model "overfits" and memorizes specific training examples, it doesn’t just hurt performance - it creates a privacy risk. Such a model could inadvertently reveal personal information through its outputs, leaving you exposed to legal and ethical challenges.

Training Inefficiencies

Beyond privacy and legal concerns, collecting too much data can lead to inefficiencies that undermine your AI projects. Larger datasets increase storage and computational costs while often diluting model accuracy by introducing irrelevant or noisy features.

"Careful data minimization can often improve model performance and reduce computational and storage costs during algorithm development." - Robin Staab et al., ETH Zurich

Using outdated or irrelevant data not only wastes resources but also slows down your entire AI pipeline. Bloated datasets increase latency, reduce throughput, and extend training times. Essentially, you're spending more money and time to process data that contributes little to no value, all while making your models less efficient. This inefficiency can become a costly burden, both financially and operationally.

sbb-itb-903b5f2

Practical Data Minimization Methods

Defining Purpose and Identifying Relevant Features

Start by defining the purpose of your data collection - this is not just a smart approach but also a legal requirement under GDPR Article 51. This regulation emphasizes that personal data must be "adequate, relevant and limited to what is necessary in relation to the purposes for which they are processed". Without a clear purpose, you risk collecting unnecessary data, which could lead to compliance issues.

To avoid this, outline your model's specific task first, and then work backward to identify only the data attributes that directly support that goal. For instance, if you're designing a credit risk model, you might need details like income and payment history, but an exact birth date could often be generalized into an age range. Generalization is just one of three key techniques in vertical data minimization. The other two include suppression (choosing not to collect certain data points) and perturbation (adding noise to data to protect privacy while retaining utility).

Once you've pinpointed the essential data attributes, the next logical step is to eliminate any redundant features systematically.

Feature Selection and Dimensionality Reduction

Feature selection is a crucial step in data minimization. Common techniques include:

- Filter methods: Use statistical metrics to evaluate features.

- Embedded methods: Integrate feature selection directly into model training, such as with L1 regularization.

- Wrapper methods: Iteratively test feature subsets, though this can be computationally intensive.

For example, a study using the Bank Marketing Dataset (11,162 customers) showed that reducing the dataset to 18.75% of its original size caused only a 2% drop in accuracy. Even more aggressive reduction to 6.25% still resulted in just a 5% accuracy decrease. Similarly, the Cloak framework, which uses gradient-based perturbation to identify vital features, achieved an 85.01% reduction in mutual information between input data and model outputs, with only a 1.42% loss in utility.

A simple starting point is to apply a variance threshold to eliminate features with minimal variability. For more advanced methods, L1 regularization (Lasso) during training helps the model zero in on the most predictive features.

Beyond these techniques, decentralized approaches offer another layer of privacy and data minimization.

Federated Learning and Decentralized Storage

Decentralized storage and federated learning take data minimization a step further by reducing the need to centralize data while still achieving effective model training. Federated learning allows data to remain on local devices, sharing only model updates instead of raw data. Major tech companies already use federated learning alongside Secure Aggregation and Trusted Execution Environments (TEEs) to maintain data privacy during training.

Secure Aggregation ensures that the central server receives only combined updates from multiple users, making individual contributions indistinguishable. TEEs add another layer of security by enabling clients to verify server-side code before sending updates. To further protect privacy, differential privacy techniques introduce controlled noise into updates, either locally on devices or during aggregation. This prevents the model from memorizing or exposing unique details about any individual.

These decentralized strategies align well with efforts like NanoGPT's approach, which processes data locally to minimize exposure while maintaining model performance [https://nano-gpt.com].

The Data Minimization Principle in Machine Learning

Privacy-Focused Techniques for AI

Expanding on earlier data minimization strategies, these privacy-focused methods provide additional layers of security for sensitive information. By combining minimization with advanced privacy tools, organizations can safeguard data without compromising the usefulness of their AI models.

Differential Privacy

Differential privacy (DP) offers a mathematical guarantee that the inclusion or exclusion of an individual's data won't noticeably impact the output of an AI model. This approach goes beyond simple methods like removing names or email addresses, which can still leave individuals vulnerable to re-identification when combined with other data sources.

One common way to implement DP in deep learning is through DP-SGD (Differentially Private Stochastic Gradient Descent). This technique modifies the training process by first limiting the influence of individual data points (gradient clipping) and then adding controlled noise - usually Gaussian or Laplacian - to the aggregated gradients before updating the model's weights. The level of privacy is measured using the epsilon (ε) parameter:

- ε ≤ 1: Indicates strong privacy protection.

- ε ≤ 10: Suitable for more complex models but still requires careful consideration.

- ε > 10: Needs additional audits to ensure privacy remains intact.

According to Google Research engineers Natalia Ponomareva and Alex Kurakin:

"Differential Privacy (DP) is one of the most widely accepted technologies that allows reasoning about data anonymization in a formal way. In the context of an ML model, DP can guarantee that each individual user's contribution will not result in a significantly different model".

Training models with DP requires careful adjustments. Practitioners often use larger batch sizes and tweak learning rates to counterbalance the utility loss caused by added noise. A popular strategy involves pre-training models on publicly available data before fine-tuning them on private datasets using DP-SGD.

In addition to DP, synthetic data generation offers another route to protect privacy while maintaining functionality.

Synthetic Data Generation

Synthetic data generation creates artificial datasets that mimic real-world data patterns, avoiding the exposure of actual user information. This method helps organizations comply with regulations like GDPR, HIPAA, and CCPA by enabling the sharing of anonymized data.

For instance, in May 2024, Google DeepMind used differentially private synthetic data to train a safety classifier for on-device models. This ensured the model's accuracy without revealing any information from its original training set. Similarly, the UK National Health Service released synthetic data derived from emergency room records to study patient care trends while protecting individual privacy.

However, synthetic data isn't without challenges. Emiliano De Cristofaro from the University of California, Riverside, highlights a key limitation:

"Protecting privacy inherently means you must 'hide' vulnerable data points like outliers... if one tries to use synthetic data to upsample an under-represented class, train a fraud/anomaly detection model, etc., they will inherently need to choose between either privacy or utility".

Synthetic datasets can also face risks like membership inference attacks or contamination if the generative model was pre-trained on sensitive public records. To reduce these risks, practitioners should de-duplicate pre-training datasets and explore tuning methods like LoRA (Low-Rank Adaptation), which can improve performance by up to 11 percentage points compared to full fine-tuning.

When combined with strong data management practices, synthetic data becomes a valuable piece of privacy-first AI workflows.

Data Lifecycle Management

Managing the data lifecycle effectively means monitoring the relevance of personal information at every stage - development, training, and inference. Organizations should enforce strict storage limits, retaining data only as long as necessary for specific purposes. Automated tools can help trace data across systems, eliminating unnecessary duplicates, while conducting Data Protection Impact Assessments (DPIAs) ensures retention practices are justified.

Classifying data by sensitivity allows for tailored retention policies. For example, highly sensitive data might require shorter storage durations and stricter access controls. Additionally, implementing rate limits on API queries can prevent privacy attacks by restricting excessive probing for private information. Regular audits help maintain control over data accumulation and ensure models perform as intended.

NanoGPT demonstrates these principles by storing data locally on user devices, reducing exposure through decentralized management [https://nano-gpt.com].

Advantages of Data Minimization

Data minimization isn't just about protecting privacy - it's also a smart way to improve resource efficiency and enhance model performance. For privacy-first AI training, it’s a game-changer.

Better Privacy and Security

When organizations collect only the data they truly need, they significantly reduce the chances of a breach. Storing less sensitive information means there's less for attackers to target, which lowers the risk and impact of potential data exposure. This approach also limits vulnerabilities to threats like inversion and inference attacks.

Another benefit? Smaller datasets make it harder for models to memorize specific examples, which further reduces privacy risks.

Lower Costs and Resource Use

Smaller datasets aren’t just safer - they’re cheaper. By adopting data minimization, organizations have reported impressive savings. For instance, companies reduced cloud storage costs by an average of 35% within a year. On top of that, AI infrastructure costs can drop by 20% to 50% without sacrificing model accuracy.

Take Aible’s example from June 2025: they fine-tuned a smaller 8-billion-parameter model for just $4.58, achieving 82% accuracy - comparable to much larger models. This approach slashed overall costs from millions to about $30,000. Similarly, a major European bank cut its data footprint by 60% in May 2025, which led to a 42% reduction in model training costs and 38% faster deployment of new AI features. Advanced AI adopters have also seen a $3.70 return for every $1 spent, with payback periods averaging 1.2 to 1.3 years.

These savings make a strong case for data minimization as a cost-effective strategy.

Better Model Performance

Smaller, more focused datasets don’t just save money - they also make models better. By cutting out unnecessary data, models face less noise during training, which can boost accuracy. For example, using Differentially Private Iterative Magnitude Pruning, BERT models achieved 50% sparsity while maintaining the same performance as their full counterparts. This also reduced memory usage and improved inference speed - essential for real-time applications where every millisecond counts.

"Careful data minimization can often improve model performance and reduce computational and storage costs during algorithm development" - Robin Staab et al., ETH Zurich

In one practical case, reducing solar energy datasets by 40% led to a 60% drop in computational demands. By focusing on the most relevant features, models train faster and deliver more precise predictions.

Data minimization isn’t just a privacy measure - it’s a smarter way to work.

Conclusion

Data minimization stands out as a key strategy in addressing the challenges of AI development. By focusing on collecting only the data that’s absolutely necessary, organizations can reduce the risk of privacy breaches and steer clear of costly fines.

This method enhances privacy by limiting the amount of data exposed during potential breaches, aligns with regulations like GDPR Article 5(c) and the California Privacy Rights Act, and boosts efficiency by cutting out unnecessary data that can lead to overfitting.

"The key is that you only process the personal data you need for your purpose." - Information Commissioner's Office (ICO)

As discussed earlier, targeted data collection is critical for meeting regulatory demands and improving operational workflows. Importantly, this shift transfers the responsibility for privacy protection from individuals to organizations, requiring companies to justify the necessity of every piece of data they gather. With over 65% of AI datasets containing redundant information, there’s a clear opportunity to improve - and plenty of advantages for those who take the lead.

FAQs

How do I decide what data is truly necessary for my model?

Collect only the data you absolutely need to accomplish your task. Start by clearly defining why you're gathering data and evaluate if each piece of information directly contributes to your model's objectives. Strategies like relevance assessment and purpose specification can help you limit data collection to what's necessary. Additionally, techniques like feature reduction or generalization can help you stay aligned with privacy regulations while minimizing unnecessary data exposure.

Will reducing features hurt accuracy or improve it?

Reducing the number of features in a dataset can actually boost accuracy by cutting out irrelevant or redundant information. By narrowing the focus to the most meaningful features, models can work more efficiently and deliver stronger performance. In fact, trimming down features often reduces noise, which can lead to clearer and more accurate results.

When should I use federated learning vs differential privacy?

Federated learning and differential privacy both focus on improving data privacy, but they tackle the issue in distinct ways. Federated learning enables multiple devices to collaboratively train a shared model without the need to transfer their local data. This approach is especially useful when sensitive data must remain stored on individual devices. On the other hand, differential privacy protects individual data points by introducing noise to the data or its outputs, making it harder to trace back to any specific individual. This method works well when sharing aggregated results while maintaining privacy. Depending on the type of data, privacy requirements, and specific use case, these two methods can also be used together for enhanced protection.