Dynamic Load Balancing: Role of Round Robin Algorithms

Dynamic load balancing ensures efficient server traffic distribution by analyzing live metrics like CPU usage and server health. Round Robin algorithms, particularly their variations, play a key role in this process. Here's a quick breakdown:

- Round Robin: Routes traffic sequentially across servers. Simple and fast but ignores server performance differences.

- Weighted Round Robin (WRR): Accounts for server capacity by assigning weights, ensuring stronger servers handle more traffic.

- Dynamic Round Robin (DRR): Adjusts weights in real-time based on server performance but requires more computational resources.

Key Takeaways:

- Use Round Robin for identical servers.

- Choose WRR for mixed-capacity setups.

- Opt for DRR in environments with fluctuating workloads, despite its higher overhead.

Each method has its strengths and weaknesses, making the choice dependent on server setups and traffic patterns. Whether you're managing web servers, cloud hosting, or AI workloads, selecting the appropriate algorithm ensures balanced performance and reliability.

Round Robin Load Balancing Explained

How Round Robin Works

Round Robin functions much like a sequential ticketing system - each incoming request is routed to the next server in line. The load balancer cycles through a predefined list: the first request goes to Server 1, the second to Server 2, and so on, before starting over again. This simple, systematic approach ensures requests are distributed evenly, forming a solid foundation for efficient system management.
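The rotation described above can be sketched in a few lines of Python. This is a minimal illustration, not a production load balancer; the class name `RoundRobinBalancer` and the server names are hypothetical.

```python
from itertools import cycle


class RoundRobinBalancer:
    """Hands out servers in a fixed, repeating order."""

    def __init__(self, servers):
        servers = list(servers)
        if not servers:
            raise ValueError("at least one server is required")
        # cycle() repeats the list forever: 1, 2, 3, 1, 2, 3, ...
        self._rotation = cycle(servers)

    def next_server(self):
        # Constant-time step: advance the rotation by one position.
        return next(self._rotation)


balancer = RoundRobinBalancer(["server-1", "server-2", "server-3"])
first_six = [balancer.next_server() for _ in range(6)]
print(first_six)
```

After the third request the rotation wraps around, so the fourth request lands on `server-1` again.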

With a time complexity of O(1), Round Robin assigns requests quickly without factoring in server-specific conditions. The only state it keeps is its position in the rotation; it does not consider server load or real-time performance. As Miguel Vieira Pinto from Network Encyclopedia describes it:

"It's like the bread and butter of load balancing - a staple in the repertoire of system administrators and network engineers".

Interestingly, more than 350 million websites globally rely on NGINX or NGINX Plus, which uses Round Robin as its default load-balancing method. NGINX Plus even enhances this by automatically retrying failed requests and temporarily blacklisting unresponsive servers for 10 seconds.
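The retry-and-cool-down behavior mentioned above can be illustrated with a small sketch. This is not NGINX's implementation; it only shows the general idea of trying the next server after a failure and skipping the failed one for a fixed period. The class `CooldownRoundRobin`, the `handler` callback, and the injectable `clock` are all assumptions made for the example.

```python
import time


class CooldownRoundRobin:
    """Round Robin that skips a failed server for a cool-down period."""

    def __init__(self, servers, cooldown_seconds=10.0, clock=time.monotonic):
        self._servers = list(servers)
        self._cooldown = cooldown_seconds
        self._clock = clock            # injectable so the sketch is testable
        self._down_until = {}          # server -> time it may be tried again
        self._index = 0

    def mark_failed(self, server):
        self._down_until[server] = self._clock() + self._cooldown

    def next_server(self):
        now = self._clock()
        # Look at each server at most once per call.
        for _ in range(len(self._servers)):
            server = self._servers[self._index]
            self._index = (self._index + 1) % len(self._servers)
            if self._down_until.get(server, float("-inf")) <= now:
                return server
        return None  # every server is cooling down

    def send(self, request, handler):
        # Retry on the next server when the current one fails.
        for _ in range(len(self._servers)):
            server = self.next_server()
            if server is None:
                break
            try:
                return handler(server, request)
            except ConnectionError:
                self.mark_failed(server)
        raise RuntimeError("no server could handle the request")


# Usage with a fake clock and a hypothetical handler in which "a" is down.
fake_now = [0.0]
lb = CooldownRoundRobin(["a", "b"], clock=lambda: fake_now[0])


def flaky(server, request):
    if server == "a":
        raise ConnectionError("a is unreachable")
    return f"{server} handled {request}"


result = lb.send("req-1", flaky)
print(result)
```

Here the first attempt fails on `a`, the request is retried on `b`, and `a` is skipped until the fake clock reaches the 10-second mark.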

Benefits of Round Robin

The simplicity of Round Robin is one of its biggest strengths. It’s easy to configure, requires minimal resources, and can be implemented quickly. Its cyclic nature ensures that each server receives an equal share of requests over time, and the predictable pattern makes troubleshooting traffic flow straightforward. The trade-off is that basic Round Robin treats every server as equal regardless of capacity, so it works best when the servers behind the load balancer are identical; mixed hardware calls for a weighted variant.

Round Robin Variants

Weighted Round Robin

Weighted Round Robin (WRR) takes the basic Round Robin approach and fine-tunes it by considering each server's capabilities. Servers are assigned numerical weights based on factors like processing power, memory, or bandwidth. Instead of cycling through servers equally, WRR distributes requests in proportion to these weights. For instance, a server with a weight of 100 would handle twice as many requests as one with a weight of 50.
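As a rough sketch of the proportional distribution described above, the simplest form of WRR repeats each server in the rotation according to its weight. The server names are hypothetical, the weights are assumed to be positive integers, and dividing by the greatest common divisor is just one convenient way to keep the rotation short.

```python
from collections import Counter
from functools import reduce
from itertools import cycle
from math import gcd


def weighted_rotation(weights):
    """Build one rotation in which each server appears in proportion to its weight."""
    # Reduce 100:50 to 2:1 so the rotation stays short.
    divisor = reduce(gcd, weights.values())
    rotation = []
    for server, weight in weights.items():
        rotation.extend([server] * (weight // divisor))
    return rotation


weights = {"large": 100, "small": 50}
rotation = weighted_rotation(weights)
picker = cycle(rotation)

# Route 300 requests and count where they land.
counts = Counter(next(picker) for _ in range(300))
print(rotation, counts)
```

With weights of 100 and 50, the server named `large` receives 200 of the 300 requests and `small` receives 100, matching the two-to-one ratio.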

This method is especially useful in setups where servers vary significantly in capacity. For example, treating a server with 8GB of RAM the same as one with 32GB could lead to the weaker server being overwhelmed. WRR ensures that stronger servers handle more traffic while still allowing less powerful ones to contribute. As Nawaz Dhandala from OneUptime explains:

"Weighted round robin lets you route more traffic to stronger servers while still giving weaker ones a fair share".

Weights are often calculated using hardware specifications - like assigning 1 point for every 4GB of RAM, with extra points for SSD storage. In a setup with weights of 5:3:2, out of 1,000 requests, Server A would handle around 500, Server B 300, and Server C 200. Maurice McMullin from Progress Kemp highlights the simplicity of this approach:

"The weighted version of round robin often gets used when the backend servers are not identical, but needs are still simple".

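However, WRR has its limitations. It relies on static weights set by administrators, which don’t adapt to real-time changes in server performance. A naive implementation can also be bursty, sending several consecutive requests to the heaviest server before moving on. Smooth Weighted Round Robin addresses the burstiness by interleaving servers more evenly while preserving the same proportions, which avoids sudden overloads.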
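One commonly described way to get smoother interleaving is to keep a running "current weight" per server: on each request every server's current weight grows by its configured weight, the server with the highest current weight is chosen, and the total of all weights is subtracted from the winner. The sketch below assumes that formulation and reuses the 5:3:2 weights from the example above; the class name is hypothetical.

```python
class SmoothWeightedRoundRobin:
    """Interleaves servers while preserving their weight proportions."""

    def __init__(self, weights):
        if not weights:
            raise ValueError("at least one server is required")
        self._weights = dict(weights)
        self._current = {server: 0 for server in self._weights}
        self._total = sum(self._weights.values())

    def next_server(self):
        best = None
        for server, weight in self._weights.items():
            # Every server gains its configured weight on each request.
            self._current[server] += weight
            # Strict '>' means ties go to the server listed first.
            if best is None or self._current[server] > self._current[best]:
                best = server
        # The winner pays back the total, so it must wait before winning again.
        self._current[best] -= self._total
        return best


swrr = SmoothWeightedRoundRobin({"A": 5, "B": 3, "C": 2})
sequence = [swrr.next_server() for _ in range(10)]
print("".join(sequence))
```

Over each cycle of ten requests, A is still chosen five times, B three times, and C twice, but the picks are spread out instead of arriving as five consecutive requests to A.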

For dynamic environments, where conditions change frequently, Dynamic Round Robin offers a more flexible solution.

Dynamic Round Robin

Dynamic Round Robin (DRR) takes WRR a step further by incorporating real-time server performance into its traffic distribution. DRR monitors metrics like CPU load, memory usage, response times, error rates, and active connections, adjusting weights dynamically to reflect current conditions. If a server shows high latency or starts generating errors, its weight is reduced, limiting the traffic it receives.

This approach helps shift traffic away from servers that are struggling - whether due to issues like garbage collection pauses or noisy neighbor problems - and toward those with more capacity. Rashan Dixon from DevX emphasizes this distinction:

"Weighted round robin is a planning tool, not a feedback mechanism".

DRR's adaptability makes it effective in environments with fluctuating workloads. As M. Rakhimov and colleagues explain, using the abbreviation DWRR (Dynamic Weighted Round Robin):

"DWRR dynamically adjusts the weight of each server based on its current performance metrics, such as CPU load, memory usage, or response time".

Additionally, DRR can lower server weights in proportion to error rates, preventing failing nodes from being overwhelmed. However, this dynamic nature comes with increased overhead due to the need for constant monitoring. To avoid sidelining servers entirely, many systems implement safeguards like minimum weight thresholds (e.g., a floor of 1) to give struggling servers a chance to recover and rebuild their cache.
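To make the weight adjustment concrete, here is a minimal sketch of how an effective weight might be derived from live metrics. The formula is an assumption chosen for illustration (scale the base weight by the success rate and by how far latency exceeds a target); real systems use their own scoring. `ServerStats`, `effective_weight`, and the 200 ms target are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ServerStats:
    base_weight: int     # static weight an administrator would assign
    error_rate: float    # fraction of recent requests that failed, 0.0 to 1.0
    latency_ms: float    # recent average response time


def effective_weight(stats, target_latency_ms=200.0, min_weight=1):
    """Scale the base weight down as errors and latency rise (illustrative formula)."""
    # Lower the weight in proportion to the error rate.
    health = 1.0 - min(max(stats.error_rate, 0.0), 1.0)
    # Penalize servers slower than the target; never reward beyond 1.0.
    latency_factor = min(1.0, target_latency_ms / max(stats.latency_ms, 1e-9))
    weight = round(stats.base_weight * health * latency_factor)
    # Floor of 1 so a struggling server still gets a trickle of traffic.
    return max(min_weight, weight)


fleet = {
    "healthy": ServerStats(base_weight=10, error_rate=0.0, latency_ms=120.0),
    "slow": ServerStats(base_weight=10, error_rate=0.0, latency_ms=400.0),
    "failing": ServerStats(base_weight=10, error_rate=0.98, latency_ms=150.0),
}
live_weights = {name: effective_weight(stats) for name, stats in fleet.items()}
print(live_weights)
```

In this sketch the slow server drops to half its base weight, while the failing server is held at the floor of 1 rather than being removed entirely. The resulting weights would then feed a weighted rotation and be recomputed whenever fresh metrics arrive.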

Comparing Round Robin Variants

Round Robin Load Balancing Algorithms Comparison: Features, Performance & Use Cases

Comparison Table

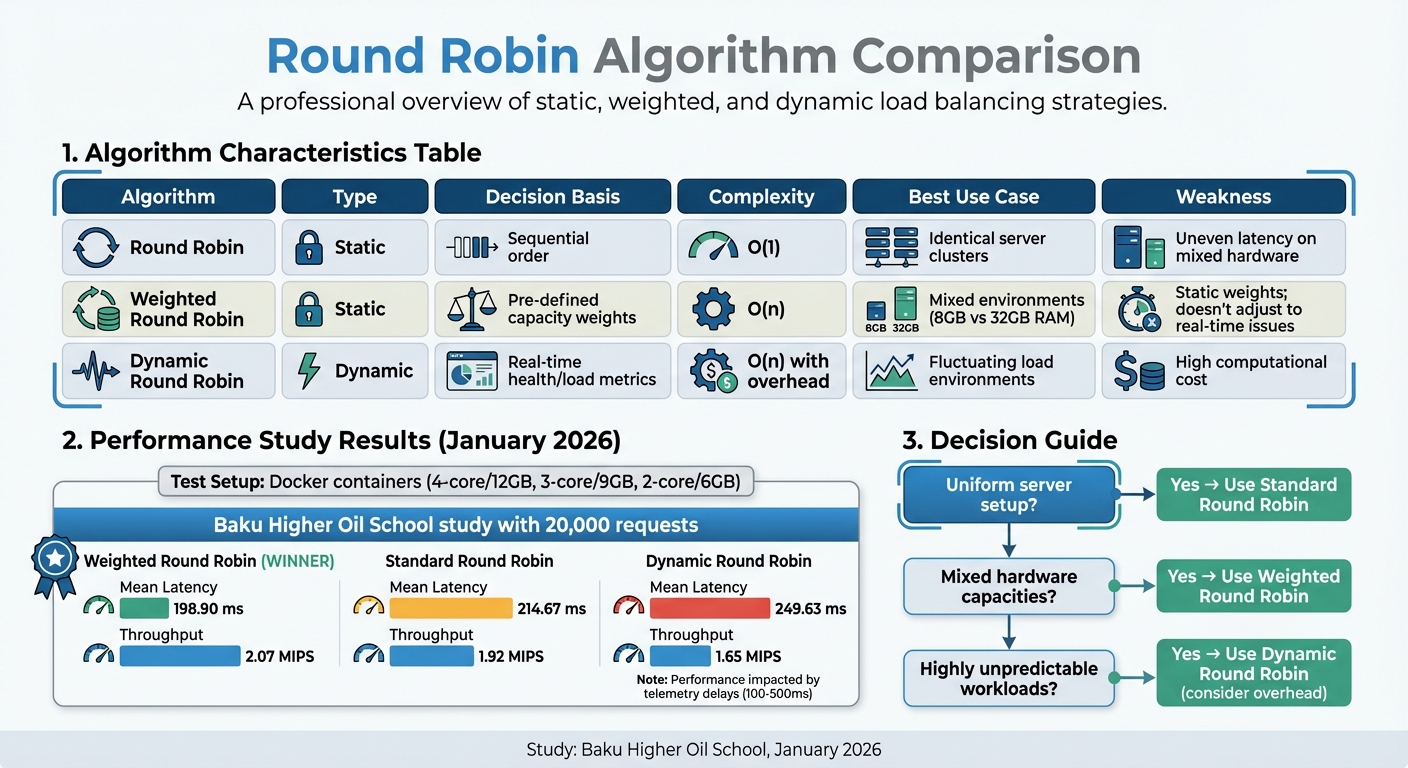

To give a clearer picture of how different Round Robin variants stack up, here's a comparison of their key aspects:

| Algorithm | Type | Decision Basis | Complexity | Best Use Case | Weakness |
|---|---|---|---|---|---|
| Round Robin | Static | Sequential order | O(1) | Identical server clusters | Can result in uneven latency on mixed hardware |
| Weighted Round Robin | Static | Pre-defined capacity weights | O(n) | Mixed environments (e.g., 8 GB vs 32 GB RAM) | Static weights; doesn't adjust to real-time issues |
| Dynamic Round Robin | Dynamic | Real-time health/load metrics | O(n) with overhead | Fluctuating load environments | High computational cost for the load balancer |

Here's what this means in practice:

- Standard Round Robin: It's simple and efficient but doesn't adapt to feedback, making it less suited for environments with varying hardware.

- Weighted Round Robin: Works well for setups with mixed hardware capabilities but remains static, unable to adjust to real-time conditions.

- Dynamic Round Robin: Offers the highest precision by factoring in real-time metrics but comes with increased computational demands.

In January 2026, researchers from the Baku Higher Oil School conducted a study using Docker containers with varying resources (4-core/12 GB, 3-core/9 GB, and 2-core/6 GB). They processed 20,000 requests and found that Weighted Round Robin delivered the best results, achieving a mean latency of 198.90 ms and throughput of 2.07 MIPS. In comparison, Standard Round Robin had a mean latency of 214.67 ms and throughput of 1.92 MIPS, while the Dynamic approach recorded a mean latency of 249.63 ms and throughput of 1.65 MIPS. The dynamic method's performance was impacted by telemetry update delays, which ranged from 100 ms to 500 ms.
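To put those figures in relative terms, the short calculation below compares each variant against standard Round Robin. It uses only the numbers quoted above; the dictionary layout and function name are just for illustration.

```python
results = {
    "Round Robin": {"latency_ms": 214.67, "throughput": 1.92},
    "Weighted Round Robin": {"latency_ms": 198.90, "throughput": 2.07},
    "Dynamic Round Robin": {"latency_ms": 249.63, "throughput": 1.65},
}
baseline = results["Round Robin"]


def percent_change(value, reference):
    """Signed percentage difference of value relative to reference."""
    return (value - reference) / reference * 100.0


relative = {
    name: {
        metric: round(percent_change(figures[metric], baseline[metric]), 1)
        for metric in ("latency_ms", "throughput")
    }
    for name, figures in results.items()
}

for name, change in relative.items():
    print(f"{name}: latency {change['latency_ms']:+.1f}%, "
          f"throughput {change['throughput']:+.1f}%")
```

Relative to standard Round Robin, the weighted variant shows roughly 7.3% lower mean latency and 7.8% higher throughput, while the dynamic variant shows roughly 16.3% higher latency and 14.1% lower throughput in this particular experiment.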

Key takeaway: Opt for Standard Round Robin in uniform setups, Weighted Round Robin for environments with mixed hardware, and Dynamic Round Robin only in cases where workloads are highly unpredictable, keeping in mind its additional overhead.

Where Round Robin is Used

Web Servers and Cloud Hosting

Round Robin algorithms play a key role in managing traffic across web servers and cloud hosting platforms. They distribute HTTP requests evenly among backend servers, so no single server is overwhelmed. A prominent example is NGINX: as noted earlier, more than 350 million websites run on NGINX or NGINX Plus, where Round Robin is the default method. The approach isn't limited to web servers - it is also common in cloud hosting environments.

Major platforms like Dropbox, Netflix, and Zynga rely on NGINX-based load balancing to handle their massive traffic demands. NGINX Plus adds another layer of reliability by rerouting requests to a different server if one fails or returns an error. It even temporarily bypasses the failing server, keeping the system running smoothly.

DNS-based Round Robin takes this concept further by rotating multiple IP addresses (A records) for a domain, enabling global traffic distribution. This technique supports Content Delivery Networks (CDNs) like Akamai and Cloudflare, while orchestration tools such as Kubernetes use it for container-level service discovery. In environments where virtual machines vary in capacity, Weighted Round Robin ensures that higher-capacity nodes handle more traffic, optimizing overall performance.
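The A-record rotation can be simulated with a small in-memory sketch. It does not speak the DNS protocol; it only shows how returning the same address list in a rotated order spreads clients that typically connect to the first address. The class name is hypothetical and the addresses come from the reserved documentation range.

```python
from collections import deque


class RoundRobinDNS:
    """Toy resolver that rotates the address list on every query."""

    def __init__(self, records):
        self._records = {name: deque(addrs) for name, addrs in records.items()}

    def resolve(self, name):
        addrs = self._records[name]
        answer = list(addrs)
        # Shift left so the next query starts with the following address.
        addrs.rotate(-1)
        return answer


dns = RoundRobinDNS({"example.com": ["192.0.2.10", "192.0.2.11", "192.0.2.12"]})
first = dns.resolve("example.com")
second = dns.resolve("example.com")
print(first[0], second[0])
```

One practical caveat: resolvers and clients cache answers, so the distribution achieved this way is coarser than per-request balancing at a proxy.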

These strategies are also critical for managing the heavy computational demands of AI workloads.

AI Workload Distribution

In AI environments, where clusters often include a mix of high-performance GPUs like NVIDIA H100s and older models like A100s, Weighted Round Robin ensures efficient resource allocation by assigning more tasks to the more powerful units. Variants like "Smooth" Weighted Round Robin further refine this process, interleaving requests (e.g., A, B, A, C) to avoid overloading a single GPU.

A compelling example comes from a study by Samah Rahamneh and Lina Sawalha in December 2019, where a weighted Round Robin algorithm applied to a hybrid CPU-FPGA system for Canny edge detection achieved a 4.8x speedup over CPU-only and a 2.1x speedup over FPGA-only setups.

Platforms like NanoGPT, which host multiple AI models such as ChatGPT, DeepSeek, and Gemini, benefit significantly from these techniques. Weighted Round Robin allows these platforms to route more requests to high-capacity servers, reducing latency for users generating text or images while maintaining system-wide responsiveness.

Dynamic Round Robin takes it a step further by adjusting weights in real-time based on metrics like response times or error rates, ensuring smooth performance during heavy AI inference workloads. Advanced versions, such as Priority Weighted Round Robin, can prioritize tasks - like handling real-time user queries over background processes - before assigning them to available GPUs or virtual machines.
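One way to picture priority-aware dispatch is a priority queue in front of a weighted rotation: urgent tasks are popped first, then each task goes to the next worker in the rotation. The sketch below is an assumption about how such a scheme could look, not a description of any specific product; `PriorityDispatcher`, the worker names, and the priority values are hypothetical.

```python
import heapq
from itertools import count, cycle


class PriorityDispatcher:
    """Pops tasks by priority, then assigns them via a weighted rotation."""

    def __init__(self, weighted_workers):
        rotation = [
            worker
            for worker, weight in weighted_workers.items()
            for _ in range(weight)
        ]
        if not rotation:
            raise ValueError("at least one worker with positive weight is required")
        self._workers = cycle(rotation)
        self._queue = []
        self._order = count()  # tie-breaker keeps equal priorities in FIFO order

    def submit(self, task, priority):
        # Lower numbers are served first.
        heapq.heappush(self._queue, (priority, next(self._order), task))

    def dispatch(self):
        assignments = []
        while self._queue:
            _, _, task = heapq.heappop(self._queue)
            assignments.append((task, next(self._workers)))
        return assignments


dispatcher = PriorityDispatcher({"gpu-fast": 2, "gpu-slow": 1})
dispatcher.submit("nightly-batch", priority=5)
dispatcher.submit("user-query-1", priority=0)
dispatcher.submit("user-query-2", priority=0)
plan = dispatcher.dispatch()
print(plan)
```

Both user queries are dispatched before the background batch job, even though the batch job was submitted first.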

These applications highlight how Round Robin algorithms adapt to diverse, high-demand scenarios, ensuring efficiency and balance across various systems.

Conclusion

Key Takeaways

Round Robin algorithms play a key role in dynamic load balancing, valued for their simplicity, speed, and even-handed distribution of traffic. The basic Round Robin method, which makes routing decisions almost instantly, has historically been a go-to solution for engineers at companies like Netflix and Amazon during their early growth stages.

What truly sets Round Robin apart, however, are its variations. Weighted and Dynamic Round Robin build on the core algorithm, allowing for proportional traffic distribution and real-time adjustments, respectively. For production environments, Smooth Weighted Round Robin is especially useful, as it prevents sudden traffic spikes by interleaving requests more evenly. While its stateless nature simplifies deployment, basic Round Robin does have limitations - it assumes all servers are equally capable and doesn’t account for server load. Pairing it with health checks is crucial to ensure reliability.

These insights provide a roadmap for selecting the most suitable Round Robin variant based on specific needs.

Final Thoughts

Choosing the right algorithm is more than just a technical decision - it’s a strategic one. For environments with uniform servers handling similar workloads, basic Round Robin offers speed and low overhead. In setups with varied server capacities, Weighted Round Robin ensures resources are utilized effectively by matching weights to server capabilities. For dynamic or unpredictable traffic, such as AI workloads, Dynamic Round Robin adjusts on the fly to maintain performance.

"The algorithm is not a detail, it is a policy decision that shapes system behavior under stress." - Theo Schlossnagle, CEO, Circonus

Ultimately, Round Robin isn’t just about spreading requests across servers - it’s about aligning your choice of algorithm with the unique demands of your infrastructure and traffic patterns. Whether you’re managing traditional web servers, orchestrating containerized applications with Kubernetes, or running AI models on platforms like NanoGPT (https://nano-gpt.com), selecting the right variant can mean the difference between seamless operations and critical failures during peak loads.

FAQs

When should I choose Weighted Round Robin over Round Robin?

Choose Weighted Round Robin if your servers have different processing capabilities or specifications. This method allocates traffic based on assigned weights, ensuring that each server handles a load proportional to its capacity. It helps maintain smooth performance and avoids overloading weaker servers.

What metrics does Dynamic Round Robin need to work well?

Dynamic Round Robin relies on live performance metrics: CPU load, memory usage, response times, error rates, and active connection counts. It combines these with each server's baseline capacity to raise or lower weights, so traffic tracks what each server can handle at that moment. The metrics also need to be fresh, since delayed telemetry can steer traffic to the wrong servers.

How do health checks affect Round Robin reliability?

Health checks play a key role in improving the reliability of Round Robin load balancing. They ensure that only servers in good condition handle incoming traffic. Without these checks, requests could end up being routed to servers that are down or overloaded, leading to errors and a frustrating experience for users. By automatically removing servers that fail health checks, the system ensures steady service availability and can handle faults effectively - an absolute must in high-traffic scenarios.
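A minimal sketch of the idea: the rotation consults a health lookup and skips any server currently marked unhealthy. How the health status is produced (periodic probes, timeouts, and so on) is outside the sketch; the `is_healthy` callback, class name, and server names are hypothetical.

```python
class HealthAwareRoundRobin:
    """Round Robin that only returns servers passing a health check."""

    def __init__(self, servers, is_healthy):
        self._servers = list(servers)
        self._is_healthy = is_healthy  # callable: server -> bool
        self._index = 0

    def next_server(self):
        # Try each server at most once before giving up.
        for _ in range(len(self._servers)):
            server = self._servers[self._index]
            self._index = (self._index + 1) % len(self._servers)
            if self._is_healthy(server):
                return server
        raise RuntimeError("no healthy servers available")


# A dict stands in for the results of an external health checker.
health = {"web-1": True, "web-2": False, "web-3": True}
lb = HealthAwareRoundRobin(health, health.get)

picks = [lb.next_server() for _ in range(4)]
print(picks)

# Once the checker marks web-2 healthy again, it rejoins the rotation.
health["web-2"] = True
recovered = [lb.next_server() for _ in range(3)]
print(recovered)
```

While `web-2` is failing its checks, traffic alternates between the other two servers; it receives requests again as soon as its status flips back.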