Global AI Regulations: 5 Key Frameworks Compared

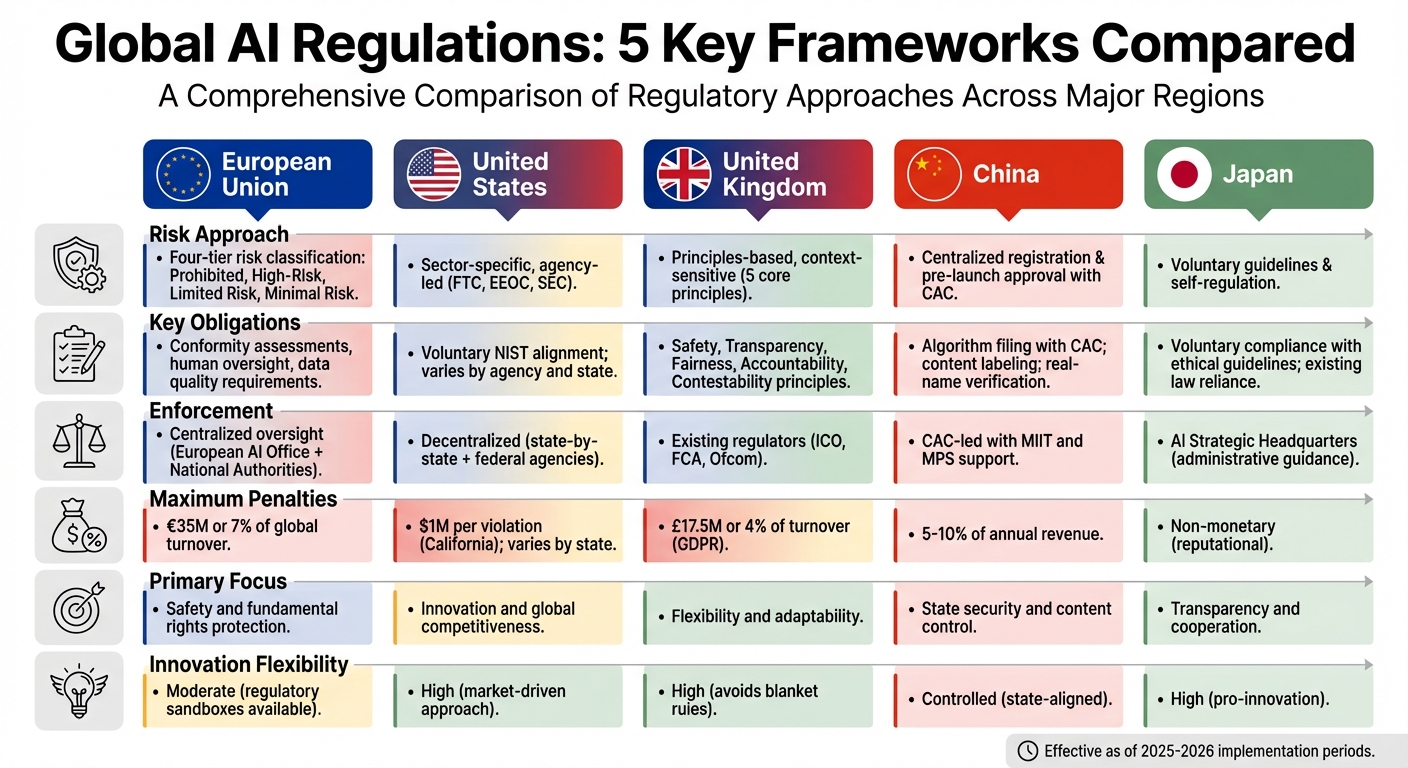

Artificial intelligence is reshaping industries worldwide, but its rapid growth has sparked concerns about fairness, privacy, and accountability. Governments are responding with distinct regulatory approaches. Here's a quick summary of the five major frameworks:

- European Union (EU): Risk-based regulation with heavy penalties (up to €35M or 7% of global turnover). Emphasizes strict compliance for high-risk AI systems like hiring and law enforcement tools.

- United States (US): Sector-specific rules led by agencies like the FTC. Focuses on innovation with voluntary standards like NIST but varies significantly by state.

- United Kingdom (UK): Principles-driven framework applied by existing regulators. Prioritizes flexibility with no overarching AI-specific law yet.

- China: Centralized control requiring algorithm registration and pre-launch approval. Prioritizes state security and mandates content alignment with national values.

- Japan: Encourages voluntary compliance through ethical guidelines and existing laws. Avoids strict penalties, focusing on cooperation and transparency.

Quick Comparison:

| Region | Risk Approach | Enforcement | Penalties | Focus |
|---|---|---|---|---|
| EU | Four-tier risk classification | Centralized oversight | Up to €35M or 7% of turnover | Safety and rights protection |
| US | Sector-specific, agency-led | Decentralized | Varies by state (e.g., $1M max) | Innovation and competition |
| UK | Principles-based | Existing regulators | GDPR fines (up to £17.5M) | Flexibility and adaptability |
| China | Centralized, pre-launch approval | CAC-led | Up to 5-10% of revenue | State security and control |
| Japan | Voluntary, self-regulation | Administrative guidance | Non-monetary | Transparency and cooperation |

For companies, compliance isn't optional - it's a competitive advantage. Aligning with these frameworks requires proactive planning, clear documentation, and flexible system design to meet varying global standards.



European Union AI Act

The EU AI Act, which entered into force on August 1, 2024, establishes a legal framework for AI with a global reach. It applies to any company whose AI systems are sold in the EU market or whose outputs are used within the EU, regardless of the company’s location.

One of the Act's standout features is its tailored approach to regulation. As Oleg Prosin of WCR.LEGAL explains, "The EU AI Act's central innovation is its rejection of blanket AI regulation in favour of a graduated, risk-proportionate framework". This means the level of regulation depends on the potential harm to safety, health, and fundamental rights. The Act's structure revolves around a risk-based classification system, which directly affects compliance and enforcement strategies.

Risk-Based Classification System

The EU AI Act organizes AI systems into four risk categories:

- Unacceptable Risk: AI practices that pose clear threats, such as public authority social scoring, subliminal manipulation, or real-time biometric identification in public settings, are completely banned. These bans have been enforceable since February 2, 2025.

- High-Risk AI: This category includes systems used in sensitive areas like hiring, credit scoring, medical devices, and law enforcement. These systems are allowed but must meet strict requirements, including conformity assessments, risk management protocols, detailed documentation, and human oversight. High-risk AI systems are expected to represent only 5% to 10% of future applications, with full compliance required by August 2, 2026.

- Limited Risk: AI systems such as chatbots or deepfakes face fewer obligations, mainly requiring transparency. Users must be informed when they’re interacting with an AI.

- Minimal Risk: Applications like spam filters and video games fall into this category, which doesn’t impose mandatory requirements. These currently make up around 80% of AI use in the EU.
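The four tiers above can be sketched as a simple lookup. This is an illustrative sketch only: the use-case keys and obligation labels are hypothetical names taken from the examples in the list, and a real classification depends on the Act's annexes and legal review, not on a table like this.

```python
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Hypothetical mapping built only from the examples named in the list above.
EXAMPLE_USE_CASES = {
    "social_scoring": RiskTier.UNACCEPTABLE,
    "subliminal_manipulation": RiskTier.UNACCEPTABLE,
    "hiring": RiskTier.HIGH,
    "credit_scoring": RiskTier.HIGH,
    "medical_device": RiskTier.HIGH,
    "law_enforcement": RiskTier.HIGH,
    "chatbot": RiskTier.LIMITED,
    "deepfake": RiskTier.LIMITED,
    "spam_filter": RiskTier.MINIMAL,
    "video_game": RiskTier.MINIMAL,
}

# Obligations per tier, summarized from the descriptions above.
OBLIGATIONS = {
    RiskTier.UNACCEPTABLE: ["banned"],
    RiskTier.HIGH: [
        "conformity assessment",
        "risk management",
        "documentation",
        "human oversight",
    ],
    RiskTier.LIMITED: ["transparency notice"],
    RiskTier.MINIMAL: [],
}


def obligations_for(use_case: str) -> list[str]:
    """Return the obligations for a known example use case.

    Raises KeyError for anything outside the illustrative table, so an
    unknown use case is never silently treated as minimal risk.
    """
    return OBLIGATIONS[EXAMPLE_USE_CASES[use_case]]
```

Failing loudly on unknown use cases is a deliberate choice here: a missing entry should trigger a review rather than default to the lightest tier.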

Enforcement and Penalties

Enforcement is divided between the European AI Office, which oversees general-purpose AI, and National Market Surveillance Authorities, which handle compliance within individual member states. These authorities are empowered to conduct audits, inspect facilities, and even review source code to ensure adherence to the rules.

The penalties for non-compliance are steep. Violations of prohibited practices can lead to fines of up to €35 million (approximately $38 million) or 7% of global annual turnover, whichever is higher. Non-compliance with high-risk system requirements can result in fines of up to €15 million or 3% of global turnover. Providing false information to authorities can incur penalties as high as €7.5 million or 1% of global turnover. Additionally, providers of high-risk systems outside the EU must appoint an EU Authorized Representative and maintain compliance records for a decade.
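As a worked illustration of the "whichever is higher" rule, the sketch below computes the maximum fine for a given global turnover. The function and key names are hypothetical, and it assumes the same higher-of rule applies to all three tiers, which the text above states explicitly only for prohibited practices.

```python
# Fixed cap in euros and percentage of global annual turnover, per tier,
# using the figures quoted above. Key names are illustrative.
FINE_CAPS = {
    "prohibited_practice": (35_000_000, 7),
    "high_risk_requirements": (15_000_000, 3),
    "false_information": (7_500_000, 1),
}


def max_fine_eur(global_turnover_eur: int, violation: str) -> int:
    """Return the upper bound of the fine: the fixed cap or the
    turnover-based cap, whichever is higher (assumed for every tier)."""
    fixed_cap, percent = FINE_CAPS[violation]
    turnover_cap = global_turnover_eur * percent // 100
    return max(fixed_cap, turnover_cap)
```

For a company with €1 billion in global turnover, the turnover-based cap for a prohibited practice (€70 million) exceeds the fixed €35 million; for a company with €100 million in turnover, the fixed cap is the higher figure.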

United States Federal AI Governance

The United States has opted for a decentralized, sector-specific approach to AI regulation instead of implementing a single, overarching law. Unlike the European Union's centralized enforcement model, the U.S. spreads regulatory responsibilities across existing agencies like the FTC, EEOC, and SEC. This framework leans on current legal authorities, executive actions, and voluntary industry standards to guide AI governance.

Following the revocation of Biden-era safety orders in early 2025, the administration shifted its focus to maintaining global leadership and reducing regulatory hurdles rather than imposing strict mandates. The guiding principle? Regulation should encourage innovation rather than stifle it, especially in the face of competition from countries like China.

Sector-Specific Regulations

Federal oversight relies on adapting existing consumer protection and civil rights laws to address AI-related concerns. For example, the FTC enforces Section 5 of the FTC Act to tackle "deceptive practices" in AI systems, while the EEOC applies Title VII to combat algorithmic discrimination in hiring.

The NIST AI Risk Management Framework (AI RMF 1.0) plays a central role in shaping U.S. AI governance, even though its adoption is technically voluntary. States like Colorado have turned this framework into a legal standard by offering a "safe harbor" to companies that demonstrate compliance, effectively setting a benchmark for "reasonable care". In 2025, state legislatures introduced 1,208 AI-related bills, with 38 states passing about 100 AI-specific measures. Notable examples include California's SB 53 and Colorado's AI Act, which impose fines of up to $1 million and $20,000 per violation, respectively.

In December 2025, Executive Order 14365 created an AI Litigation Task Force to challenge state laws deemed overly restrictive or misaligned with federal deregulatory goals. The federal government has even threatened to withhold broadband grants from states enforcing stringent AI regulations. This mix of state and federal actions underscores the U.S. strategy of fostering innovation while managing regulatory diversity.

Focus on Innovation

The U.S. treats AI policy as a national security priority, integrating it into broader diplomatic efforts to maintain global dominance. This innovation-driven approach is designed to minimize compliance costs, ensuring resources are directed toward research and development. However, this flexibility has its downsides. Federal oversight tends to be reactive, addressing problems only after they arise and sometimes failing to catch biases in critical areas like hiring or lending.

A 2025 AuditBoard study found that just 25% of organizations had fully operational AI governance programs, and a McKinsey report from the same year found that nearly 50% had experienced governance or ethical lapses in AI initiatives. Meanwhile, the AI governance market, valued at $59.2 million in 2025, is expected to grow to $354.1 million by 2033.

For AI developers and businesses, navigating this regulatory environment means juggling voluntary standards, varying state requirements, and federal efforts to override stricter state laws. This decentralized framework reflects the broader complexities of global AI governance.

As legal experts Michael Johnson and Wills Catling have observed, "The era of fragmented AI governance is ending. The era of federal AI regulation has begun".

United Kingdom AI Regulation

The United Kingdom takes a different path compared to the EU and the U.S. by opting for a principles-based, non-statutory framework to oversee AI. Instead of introducing a single AI regulator or passing sweeping legislation, the UK leans on existing sector-specific organizations - like the Information Commissioner's Office (ICO), the Financial Conduct Authority (FCA), and Ofcom - to apply five core principles to AI within their respective areas of responsibility.

This approach emphasizes flexibility, addressing AI's ability to operate with adaptivity (performing tasks not explicitly programmed) and autonomy (functioning without continuous human oversight). This adaptability helps the UK respond quickly to technological advancements without being tied to rigid definitions.

In 2024, the UK government allocated $12.3 million (roughly £10 million) to enhance AI expertise across approximately 90 regulatory bodies. The investment builds on an already strong position: by 2023, the UK ranked third worldwide in AI research and development, and private AI investment had reached $4.65 billion in 2021 alone. The UK also hosts one-third of all AI companies in Europe - more than double the number of any other European nation. Below, we’ll explore the framework’s core principles and its connection to global standards.

Principles-Based Framework

The UK's framework is built around five guiding principles that regulators apply across sectors: Safety, Security and Robustness; Transparency and Explainability; Fairness; Accountability and Governance; and Contestability and Redress. While these principles are not legally binding yet, they provide clear expectations for AI systems' performance and behavior.

"A heavy-handed and rigid approach can stifle innovation and slow AI adoption. That is why we set out a proportionate and pro-innovation regulatory framework."

– Michelle Donelan, Secretary of State for Science, Innovation and Technology

This principles-based strategy is already being put into action. For example, in September 2025, the Financial Conduct Authority launched an "AI live testing" initiative, allowing financial firms to test AI models in real-world scenarios using synthetic data before full-scale deployment. Similarly, in April 2024, the Medicines and Healthcare products Regulatory Agency introduced the "AI Airlock" sandbox, enabling developers of AI-driven medical devices to gather evidence of safety and effectiveness under real-world conditions.

However, the regulatory landscape may evolve further. After the Labour Party's victory in the July 2024 general election, plans were announced for targeted legislation addressing the "most powerful" AI models. A formal AI Bill is anticipated in late 2026, signaling a shift toward balancing innovation with concerns about the risks posed by advanced AI systems.

Alignment with Global Standards

Although the UK’s approach differs from the EU's more prescriptive AI Act, it achieves technical alignment through the British Standards Institution (BSI) and collaborations with European organizations like CEN and CENELEC. These partnerships help establish shared technical benchmarks for robustness and traceability, even as regulatory philosophies diverge.

The UK is also deeply involved in international AI initiatives. Efforts like the Bletchley Declaration and the G7 Hiroshima Process highlight its commitment to global cooperation. In February 2025, the UK renamed its AI Safety Institute to the AI Security Institute, signaling a shift in focus toward addressing risks like cybersecurity and weaponization - areas that align closely with U.S. priorities. Later that year, in November, the UK and Singapore launched the "UK-Singapore AI-in-Finance Partnership", aimed at joint testing of AI technologies and sharing regulatory insights.

For British firms operating in the EU, dual compliance remains a challenge. Despite the UK's lighter regulatory touch, companies must still meet the EU AI Act's stricter requirements to access European markets. This dual approach reflects the UK's broader strategy: fostering domestic innovation while ensuring businesses remain competitive internationally.

China AI Oversight Regulations

China has taken a distinct approach to AI oversight, prioritizing state security and social stability above innovation or individual freedoms. Unlike the EU's risk-tiered obligations or the U.S.'s fragmented regulatory landscape, China mandates pre-launch approval for AI services. This means every algorithm must be registered with the Cyberspace Administration of China (CAC) and must pass stringent security assessments before deployment. By March 31, 2025, 346 generative AI services were registered with the CAC, and over 5,000 algorithms were listed in the national registry by November of the same year.

This "security-first" mindset also extends to content regulation. AI systems are required to align with Socialist Core Values and must avoid outputs that could threaten national security, spread misinformation, or challenge state authority. The CAC has the power to demand immediate changes or even suspend AI services if they pose risks to public opinion. Furthermore, anonymity is not an option - users must register with valid Chinese identification, ensuring real-name verification.

"To operate AI in China at scale, you must treat the government as an active co-pilot in your algorithm design, not just an external regulator."

– Pertrama Partners

For international developers, compliance with these regulations requires significant adjustments. Data localization laws mandate that personal and sensitive data collected in China be stored on domestic servers. Any cross-border data transfers must undergo CAC security reviews, which can take anywhere from 6 to 12 months. Foreign companies are often required to establish a legal entity within China to manage these processes. Noncompliance can lead to heavy penalties, including fines of up to 50 million RMB or 5% of annual turnover. Severe breaches related to content security might result in fines as high as 10% of the previous year's revenue.

State Control and Security

China’s regulatory framework reflects a centralized, state-controlled approach with targeted, application-specific rules. Instead of enacting one overarching AI law, the government uses what it calls "small-incision" regulation - creating tailored rules for specific technologies like generative AI and deepfakes, allowing quick adaptation to new developments. The CAC leads this effort, supported by the Ministry of Industry and Information Technology (MIIT) for technical standards and the Ministry of Public Security (MPS) for criminal enforcement.

By December 2023, more than 850 AI algorithms had been registered with the CAC, including those from major players like Baidu, Alibaba, and Tencent. Amendments to the Cybersecurity Law in October 2025, effective January 1, 2026, further embedded AI governance into national security legislation. A key pillar of this framework is data sovereignty: companies must store Chinese user data within the country and use CAC-approved keyword databases for real-time monitoring. These requirements present significant hurdles for global AI firms accustomed to centralized data systems.

Ethical Guidelines

China’s ethical guidelines for AI development are equally stringent, emphasizing human oversight and control. The framework is based on six core principles, which translate into 18 specific mandates covering the entire AI lifecycle. A major focus is on ensuring that humans retain full decision-making authority, including the ability to withdraw from AI interactions or shut down systems entirely. Draft rules introduced in 2025/2026 also target AI systems designed for emotional interaction, requiring providers to monitor extreme user emotions and intervene to prevent addiction.

In September 2025, China unveiled its AI Safety Governance Framework 2.0, which assigns AI systems a risk grade ranging from low to extremely serious, enabling continuous monitoring. Organizations working on high-risk AI projects in sensitive areas like healthcare or public opinion must establish internal ethics committees with at least seven members. These projects are also subject to government-led expert evaluations.

"Development and security must be balanced... technological advancement must not create systemic risks, social instability, or national security threats."

– Shihui Partners

Transparency has also become a critical focus. Starting September 1, 2025, all AI-generated content must include visible watermarks and machine-readable metadata to enable platform verification. China has set ambitious goals for AI adoption, aiming for 70% penetration in key sectors by 2027 and 90% by 2030. This approach, emphasizing state-directed governance, contrasts sharply with the EU's risk-based model and the UK's principles-driven framework, showcasing China's preference for centralized control over market-driven innovation.

Japan AI Act

Japan has taken a distinct path in AI regulation compared to Europe, the US, and China. Through the Act on Promotion of Research and Development, and Utilization of AI-related Technology, enacted in May 2025 and effective September 1, 2025, Japan has chosen to emphasize high-level principles rather than enforce rigid rules. This law avoids financial penalties, relying instead on existing legal frameworks like the Act on the Protection of Personal Information (APPI) and the Copyright Act to address issues as they arise.

At the heart of Japan’s approach is the concept of "Agile Governance" - a dynamic model that adapts to technological changes without locking industries into strict regulations. Unlike the EU’s preemptive risk classifications or China’s pre-launch approval systems, Japan handles risks after deployment, using its existing laws to address problems. This innovation-friendly strategy reflects Japan’s economic priorities, particularly in light of its record digital trade deficit of 6.6 trillion yen in 2024 and its global ranking of 12th in private AI investment.

Transparency and Cooperation

Transparency is a cornerstone of the Japan AI Act, codified as one of its four core principles (Article 3) to safeguard against misuse and uphold citizens’ rights. Instead of fines, the Act encourages transparency and voluntary cooperation through administrative guidance and reputational incentives. This contrasts sharply with the EU’s hefty fines, which can reach up to 7% of global turnover.

"The Act is specifically designed to create an environment that encourages investment and experimentation by deliberately avoiding the imposition of stringent rules or penalties that could stifle development." – Dominic Paulger, Deputy Director for Asia-Pacific, FPF

To coordinate AI policy, the government launched the AI Strategic Headquarters on September 1, 2025, under the leadership of the Prime Minister. This body oversees the Basic AI Plan and commissions studies on AI risks, such as the societal effects of deepfake pornography and the use of AI in hiring practices.

Japan’s cooperative model is evident in enforcement actions. For example, in 2025, the government formally requested OpenAI to avoid copyright infringement after its Sora 2 text-to-video model generated content resembling copyrighted anime and video game characters. Similarly, when Yomiuri Shimbun sued the AI firm Perplexity for alleged unauthorized content collection, it highlighted how existing copyright laws remain effective enforcement tools, even though the AI Act itself is non-punitive.

Reliance on Existing Laws

Japan’s AI governance leans heavily on existing laws to address post-deployment risks. The Act on the Protection of Personal Information (APPI) tackles data misuse, with corporate fines reaching up to 100 million yen for violations. The Copyright Act handles issues related to training data and AI-generated outputs, while Consumer Protection Laws address misleading AI-generated content.

This framework reflects a co-regulatory approach, where sector-specific ministries provide tailored guidance rather than imposing blanket rules. For industries like healthcare or finance, which operate in high-risk environments, compliance with stricter, ministry-led regulations is required alongside adherence to the broader AI Promotion Act.

"Japan's approach and the EU's risk-first regulation may be more complementary than contradictory, each offering valuable insights for the evolving global AI governance ecosystem." – Bird & Bird

To further support industry norms, the government has issued "living documents" like the AI Guidelines for Business, first consolidated in 2024 and updated in 2025. These guidelines help developers meet transparency requirements through internal documentation and bias-testing, avoiding the rigid assessments mandated by the EU AI Act. This flexible, co-regulatory system adds a unique dimension to global AI governance.

Comparison Table

When it comes to regulating artificial intelligence, the world's major frameworks take some very different approaches. The EU AI Act stands out with its strict, all-encompassing legal framework. It uses a four-tier risk classification system and imposes pre-market requirements. In contrast, the United States opts for a fragmented, sector-specific strategy where agencies like the FTC, FDA, and EEOC oversee AI within their respective domains. The UK takes a more hands-off approach, avoiding AI-specific laws and instead asking existing regulators to apply five guiding principles - safety, transparency, fairness, accountability, and redress - based on the context. China focuses on centralized control, requiring algorithms to be registered with the Cyberspace Administration of China (CAC) and emphasizing state security and content moderation. Meanwhile, Japan relies on voluntary, non-binding guidelines, encouraging self-regulation by the private sector alongside existing copyright and data protection laws.

Enforcement also varies widely. The EU enforces its rules with fines of up to €35 million or 7% of global annual turnover for violations. China's penalties can reach 5% of annual revenue, rising to 10% for severe content-security breaches, and may include operational sanctions like business suspension or license revocation. In the UK, regulators such as the ICO can impose fines of up to £17.5 million or 4% of global annual turnover, as outlined under GDPR. The United States takes a state-specific approach - for instance, California fines up to $1 million per violation, while Texas caps penalties at $200,000 per violation. Japan, however, takes a softer stance, relying on administrative guidance and reputational incentives rather than monetary penalties.

| Dimension | EU AI Act | US Federal Governance | UK AI Regulation | China AI Oversight | Japan AI Act |
|---|---|---|---|---|---|
| Risk Approach | Four-tier classification (Prohibited to Minimal) | Sector-specific & agency-led | Principles-based & context-sensitive | Centralized registration & security-focused | Voluntary guidelines & self-regulation |
| Key Obligations | Conformity assessments, human oversight, data quality | Voluntary NIST alignment; agency-specific rules | Adherence to five cross-sectoral principles | Algorithm filing with CAC; content labeling | Voluntary compliance with ethical guidelines |
| Penalties/Enforcement | Up to €35M or 7% of global turnover | $1M per violation (CA); varies by state | Up to £17.5M or 4% of turnover (GDPR) | Up to 5% of annual revenue | Primarily non-monetary |
| Innovation Flexibility | Moderate (regulatory sandboxes available) | High (market-driven; EO 14179 removes barriers) | High (avoids blanket rules) | Controlled (state-aligned) | High (pro-innovation) |
| Global Impact | High ("Brussels Effect" sets global baseline) | Moderate (influences through market power) | Moderate (model for flexible governance) | Moderate (influences regional standards) | Low/Moderate (focus on the private sector) |

These regulatory differences significantly impact how multinational companies approach compliance and innovation. The "Brussels Effect" is particularly influential, as many global firms adopt the EU AI Act as their baseline for compliance. This allows them to streamline governance across multiple regions while maintaining access to the EU market - even in areas with less stringent rules.

"Documentation is the universal currency of AI compliance: without clear records of design, testing, and oversight, regulators will assume you did nothing".

Such varied frameworks highlight the challenges and opportunities AI developers face when navigating these regulatory landscapes.

What This Means for AI Developers and Deployers

Navigating fragmented regulations means compliance needs to be baked into the very foundation of your AI systems. Many multinational companies rely on the EU's regulatory baseline as a guide, ensuring their systems meet requirements for risk management, documentation, and oversight across various jurisdictions.

To handle the complexity of global regulations, developers should design systems with built-in compliance layers. These layers can adapt to regional requirements without requiring a complete system overhaul. For example, you might include content moderation filters tailored for China or opt-out notices specific to California. This approach ensures compliance is part of the product’s architecture from the start rather than an afterthought.
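One way to picture such a compliance layer is a per-region list of small transformations applied to every response. The sketch below is a minimal illustration under stated assumptions: the region codes, notice wording, blocked-term list, and all function names are hypothetical placeholders, not requirements drawn from any of the laws discussed.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Response:
    text: str
    notices: list[str] = field(default_factory=list)


Layer = Callable[[Response], Response]

# Placeholder wording and terms; real values would come from legal review.
AI_DISCLOSURE = "You are interacting with an AI system."
OPT_OUT_NOTICE = "You can opt out of automated processing."
BLOCKED_TERMS = ["example-blocked-term"]


def add_ai_disclosure(resp: Response) -> Response:
    return Response(resp.text, resp.notices + [AI_DISCLOSURE])


def add_opt_out_notice(resp: Response) -> Response:
    return Response(resp.text, resp.notices + [OPT_OUT_NOTICE])


def moderate_content(resp: Response) -> Response:
    text = resp.text
    for term in BLOCKED_TERMS:
        text = text.replace(term, "[removed]")
    return Response(text, list(resp.notices))


# Each region gets its own ordered list of layers; the core system is unchanged.
REGION_LAYERS: dict[str, list[Layer]] = {
    "EU": [add_ai_disclosure],
    "US-CA": [add_opt_out_notice],
    "CN": [moderate_content],
}


def apply_compliance(resp: Response, region: str) -> Response:
    """Run the response through every layer configured for the region."""
    for layer in REGION_LAYERS.get(region, []):
        resp = layer(resp)
    return resp
```

Adding a jurisdiction then means adding an entry to `REGION_LAYERS` rather than changing the model-serving code, which is the property the paragraph above is describing.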

Clear documentation is now a cornerstone of AI compliance. Without detailed records of design decisions, testing processes, and oversight protocols, regulators may assume non-compliance. To stay ahead, maintain a machine-readable inventory that links features to model versions, risk levels, and applicable jurisdictions. Tools like model cards and impact assessments are becoming essential. Using the EU’s rigorous standards as a guide can help streamline these efforts.
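A machine-readable inventory of this kind could be as simple as a list of typed records serialized to JSON. The sketch below is one possible shape, not a standard schema: the field names, example features, and file paths are all hypothetical.

```python
import json
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass(frozen=True)
class AIFeatureRecord:
    feature: str
    model_version: str
    risk_level: str                  # e.g. "high", "limited", "minimal"
    jurisdictions: tuple[str, ...]   # where the feature is offered
    model_card: str                  # path to the model card
    impact_assessment: Optional[str] = None  # path, if one exists


# Hypothetical entries for illustration only.
INVENTORY = [
    AIFeatureRecord(
        feature="resume_screening",
        model_version="2.3.1",
        risk_level="high",
        jurisdictions=("EU", "US-CO"),
        model_card="docs/model_cards/resume_screening.md",
        impact_assessment="docs/impact/resume_screening.md",
    ),
    AIFeatureRecord(
        feature="support_chatbot",
        model_version="1.8.0",
        risk_level="limited",
        jurisdictions=("EU", "UK", "JP"),
        model_card="docs/model_cards/support_chatbot.md",
    ),
]


def to_json(records: list[AIFeatureRecord]) -> str:
    """Serialize the inventory so auditors and tooling can read it."""
    return json.dumps([asdict(r) for r in records], indent=2, sort_keys=True)


def features_in(records: list[AIFeatureRecord], jurisdiction: str) -> list[str]:
    """List the features offered in a given jurisdiction."""
    return [r.feature for r in records if jurisdiction in r.jurisdictions]
```

Keeping the inventory in version control alongside the code gives each release a dated record of which model version served which jurisdiction.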

Compliance is no longer just a legal box to check - it’s becoming a selling point. Enterprise and government clients are increasingly including AI governance in their procurement requirements. Companies with strong compliance frameworks are more likely to win contracts, while those neglecting it could lose out on market opportunities. A proactive approach not only reduces legal risks but also boosts competitiveness.

If you're unsure where to start, consider a 90-day plan. Begin by inventorying your AI features in the first 30 days. Next, embed control mechanisms like CI/CD release gates between Days 31 and 60. Finally, spend Days 61 to 90 setting up a system to monitor regulatory changes. AI laws are evolving rapidly - take the EU AI Act, which rolls out in stages through 2027, or the fact that all 50 U.S. states introduced AI legislation in 2025. Your systems need to stay flexible as these regulations continue to shift.
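A release gate for Days 31 to 60 could be a small check that blocks deployment when a feature's records are incomplete. The following is a minimal sketch, assuming inventory entries are plain dictionaries with hypothetical field names; the specific rules (required fields, an impact assessment for high-risk features) are illustrative choices, not legal requirements.

```python
# Fields every inventory entry must carry before release (illustrative).
REQUIRED_FIELDS = ("model_version", "risk_level", "jurisdictions", "model_card")


def gate_failures(record: dict) -> list[str]:
    """Return the reasons a feature should be blocked; empty means pass."""
    failures = [f"missing {name}" for name in REQUIRED_FIELDS if not record.get(name)]
    if record.get("risk_level") == "high" and not record.get("impact_assessment"):
        failures.append("high-risk feature lacks an impact assessment")
    return failures


def run_gate(records: list[dict]) -> int:
    """Print every failure and return a process-style exit code (0 = pass)."""
    exit_code = 0
    for record in records:
        for failure in gate_failures(record):
            print(f"{record.get('feature', '<unnamed>')}: {failure}")
            exit_code = 1
    return exit_code
```

In a pipeline, a step could load the inventory and call `sys.exit(run_gate(records))`, so that a non-zero exit code stops the release.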

FAQs

How do I know if my AI system is “high-risk” under the EU AI Act?

To figure out if your AI system is considered "high-risk" under the EU AI Act, you'll need to check the categories listed in Annex I or Annex III. These sections focus on the system's intended use and the potential risks it might pose. For systems under Annex III, some might not be classified as high-risk if they can demonstrate minimal potential for harm. However, this requires proper documentation and reporting to comply with the Act's requirements.

If I operate in multiple countries, which AI regulation should I follow first?

If your business spans multiple countries, it’s wise to focus on AI regulations with the most extensive reach and impact - like the EU AI Act. This regulation stands out because of its extraterritorial scope and risk-based structure, setting a global standard for safety, transparency, and accountability. By aligning with the EU framework, you can streamline compliance efforts across various regions, even as nations like the US and China adopt more localized or industry-specific rules.

What compliance documents should I prepare to satisfy most regulators?

Most regulators look for the same core set of documents: risk and impact assessments, safety and fairness testing records, and detailed materials like model cards and transparency reports. Such documentation reflects global standards focusing on risk management, transparency, and human oversight. This step is especially critical in areas like the EU, where failing to meet compliance requirements can result in hefty penalties.