AI Compliance vs. Innovation: Striking a Balance

Embedding compliance into AI development turns regulatory constraints into a competitive advantage for scalable, trustworthy innovation.

Updates, guides, and insights from the NanoGPT team

Compare masking and tokenization across reversibility, compliance, performance, cost, and use cases to pick the right data protection method for production or non-production environments.

Compare GANs and Transformers for image generation: when to use GANs for photorealism, Transformers for context-aware tasks, and when hybrid models help.

Five-step guide to Bayesian hyperparameter tuning: define search space, choose surrogate and acquisition strategies, run optimization, validate, deploy.

Explore how feature attribution enhances transparency in AI image generation, boosts user trust, and meets regulatory standards.

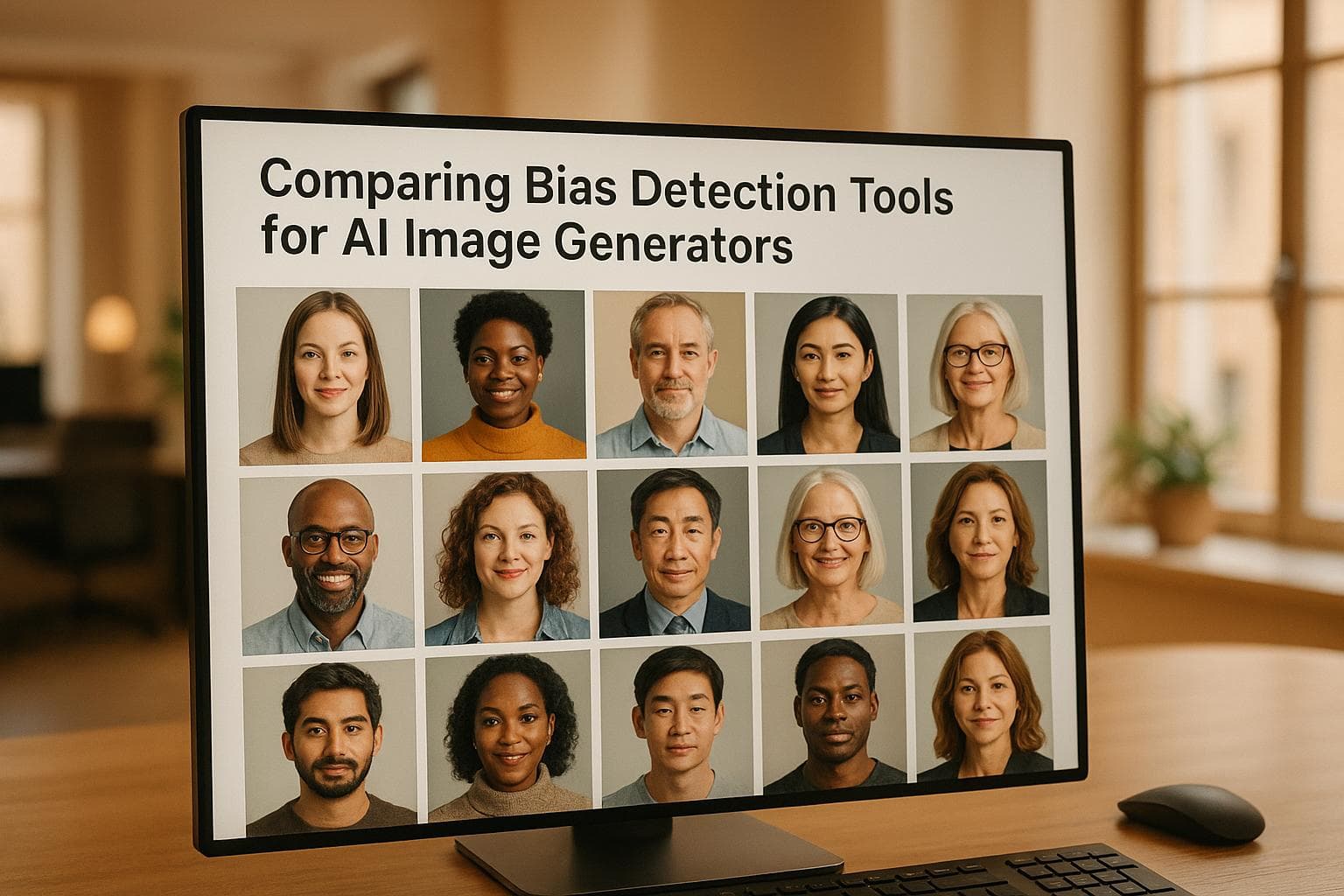

Explore essential bias detection tools for AI image generators, highlighting their unique features, strengths, and use cases.

Explore how Vision-Language Models combine images and text for tasks like captioning and question answering, and their impact across various industries.

Learn how to successfully implement neural style transfer in your projects with this comprehensive checklist covering methods, tools, and best practices.

Explore the impact of latency on multi-region AI deployments and discover strategies to enhance performance and user experience.

Learn how customizable churn prediction tools can help subscription-based businesses reduce customer loss and improve retention.