OAuth vs OpenID Connect in AI Platforms

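Compare OAuth 2.0 and OpenID Connect (OIDC) for AI platforms: OAuth 2.0 handles authorization (what a client may access), while OIDC, an identity layer built on top of OAuth 2.0, adds authentication (who the user is) for securing AI agents and APIs.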

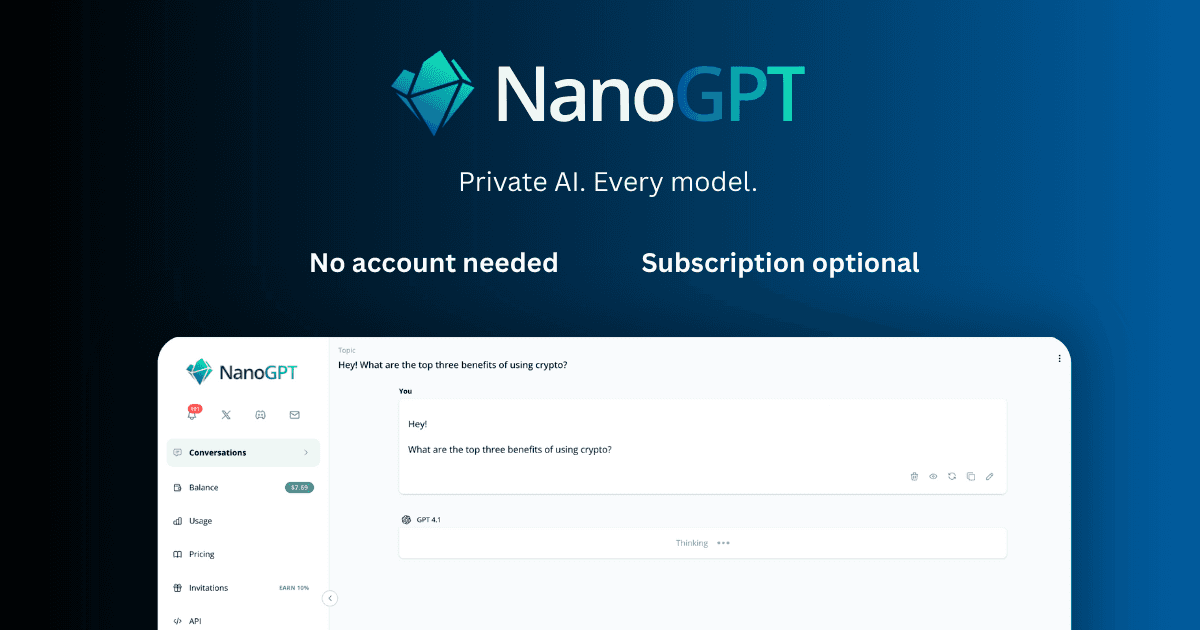

Updates, guides, and insights from the NanoGPT team
