Efficient Context Expansion: Techniques Compared

Compare six long-context methods for LLMs: positional scaling, sparse attention, compression, cross-attention, and memory systems for efficient context expansion.

Updates, guides, and insights from the NanoGPT team

Practical best practices to optimize, secure, and deploy proprietary AI frameworks on constrained edge devices.

Stream AI-generated text with SSE, prompt caching, and live data to deliver fast, personalized website content.

Monitor, automate error handling, version models, optimize resources, and protect data to keep hybrid AI workflows reliable.

Focused dashboards that track engagement, efficiency, and costs are the difference between wasted AI spend and measurable business impact.

Pin packages, models, and Docker images to ensure reproducible, secure AI deployments—commit lockfiles, verify hashes, and scan for vulnerabilities.

Compare refresh-token rotation and revocation for JWTs: benefits, trade-offs, performance impacts, and implementation tips (cookies, Redis, token versioning).

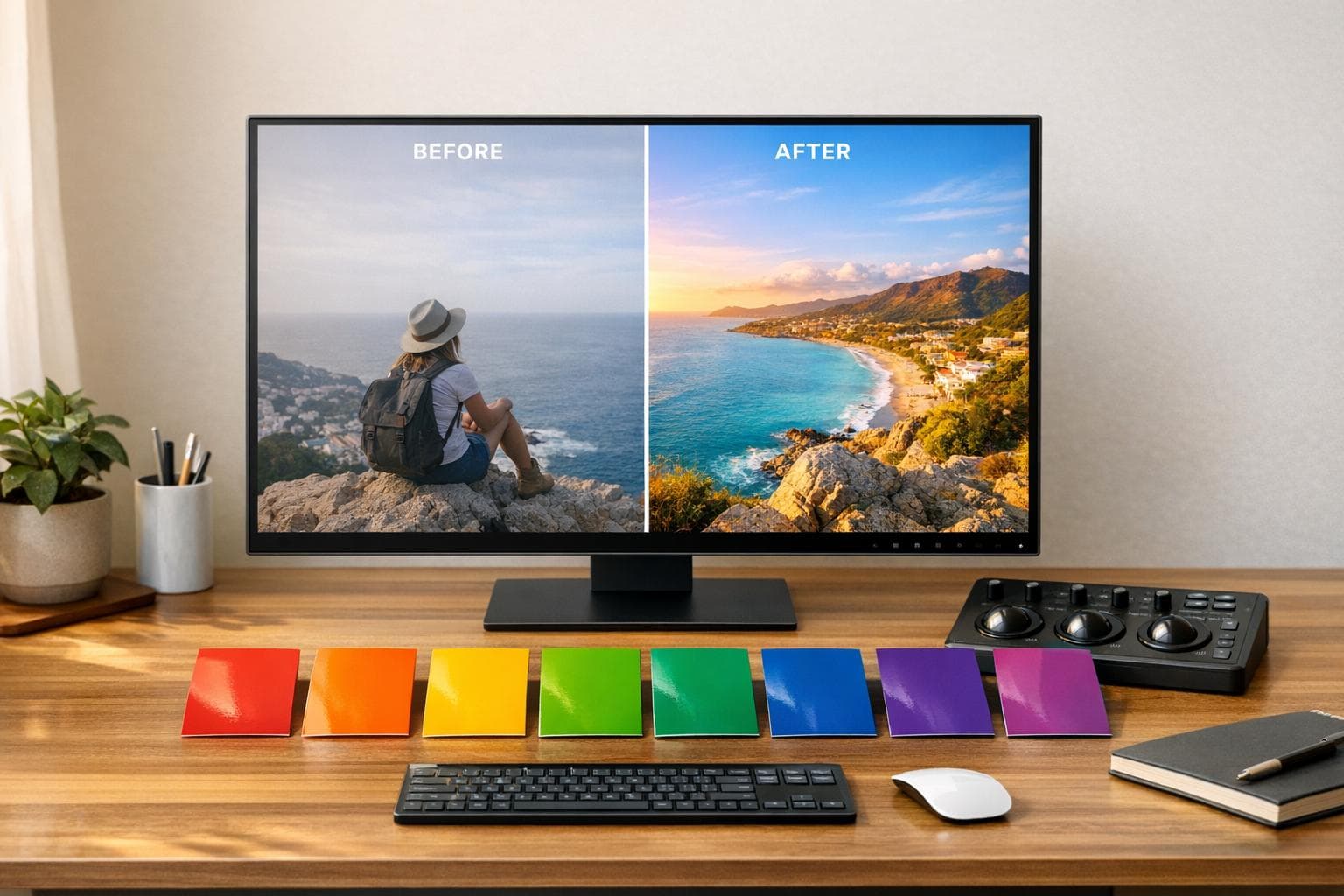

Compare seven AI color correction tools for photo and video — features, pricing, platforms, and best use cases to find the right fit.

Compare five major AI frameworks on risk approach, enforcement, penalties, and what developers must do to stay compliant across global markets.

How AI is transforming data observability in 2026: real-time anomaly detection, predictive analytics, unified platforms, autonomous agents, and cost-control strategies.