Scaling Depth vs. Width: Cost Trade-Offs

Wider models win for throughput; deeper models win for reasoning — the right mix, not raw size, controls AI cost, latency, and performance.

Updates, guides, and insights from the NanoGPT team

Treat multimodal pipelines as first-class systems: modularize by modality, partition and shard data, autoscale components, and reduce wasted compute.

Compare five scalable churn prediction tools by features, AI models, integrations, and pricing, with options for small teams through large enterprises.

Choose between performance and scalability or simplicity when parsing API JSON in Java: streaming, memory use, and framework integration determine the best fit.

Practical strategies to benchmark multimodal pipelines: hardware choices, monitoring, modality-aware scheduling, memory control, and cost-saving tips.

Ensure AI produces consistent outputs across AWS, Azure and Google Cloud using Kubernetes, IaC, centralized monitoring, drift detection, and cost controls.

AI-driven allocation is essential: it predicts workloads and offloads tasks across edge and cloud to cut latency, save energy, and improve efficiency.

Use RFC 9457 Problem Details, accurate HTTP status codes, actionable messages, and centralized middleware to make API errors consistent, secure, and machine-readable.

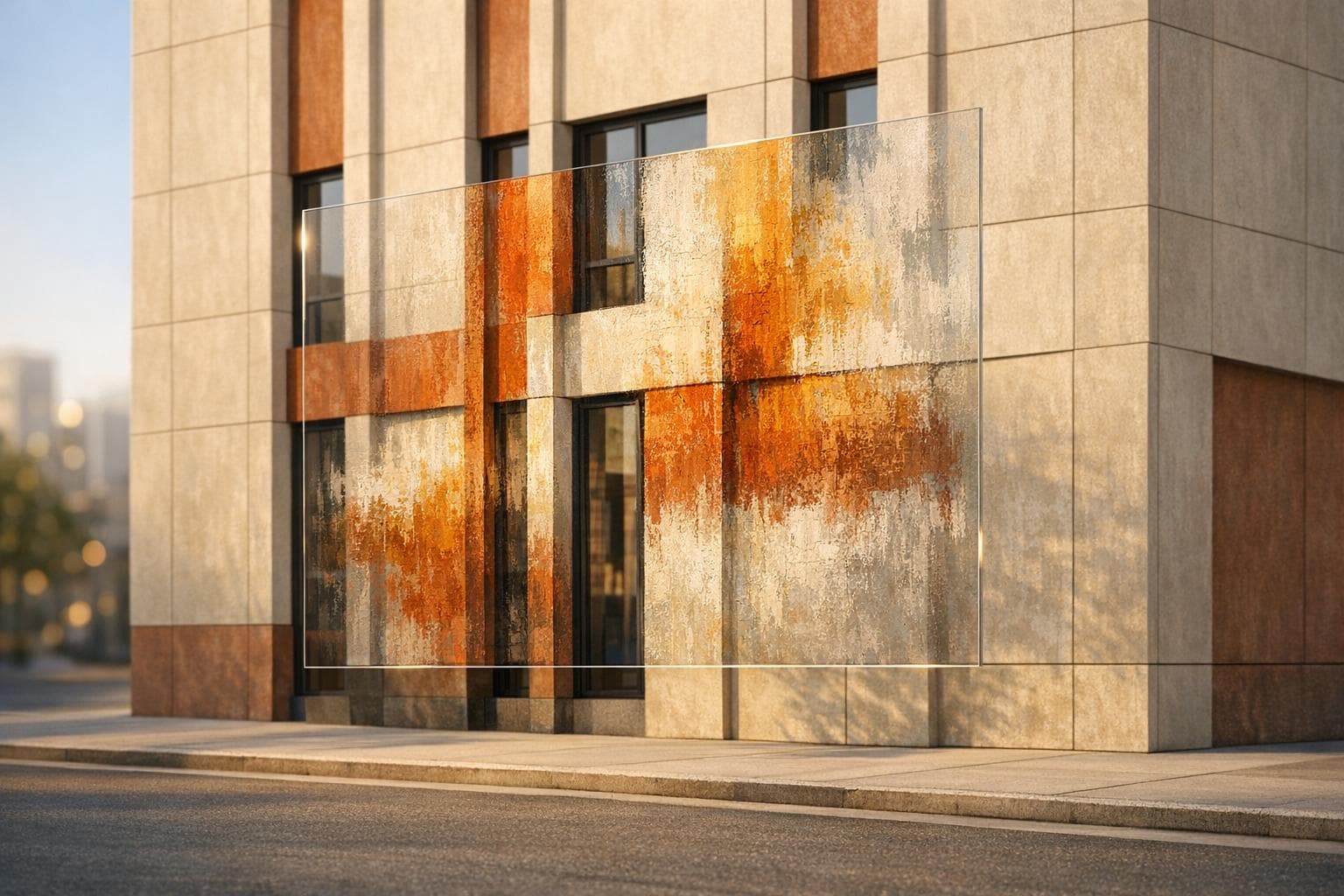

AI style transfer fuses one image’s structure with another’s textures to create photorealistic or artistic results using CNNs, GANs, and diffusion models.

Step-by-step guide to estimating AI image API expenses — per-image vs token pricing, subscriptions, batch savings, hidden fees, and example cost calculations.