Supervised Learning for Churn: A Beginner's Guide

A guide to building supervised churn models: collect and clean data, engineer features, train logistic regression, random forest, and XGBoost models, and evaluate them with recall and F1.

Updates, guides, and insights from the NanoGPT team

Layer IP reputation, behavioral analysis, rate limits, TLS fingerprints, and CAPTCHAs to detect and block malicious bots targeting your APIs.

Practical guidelines for testing AI models: define objectives, build golden datasets, run edge-case and adversarial tests, version control, and monitor drift.

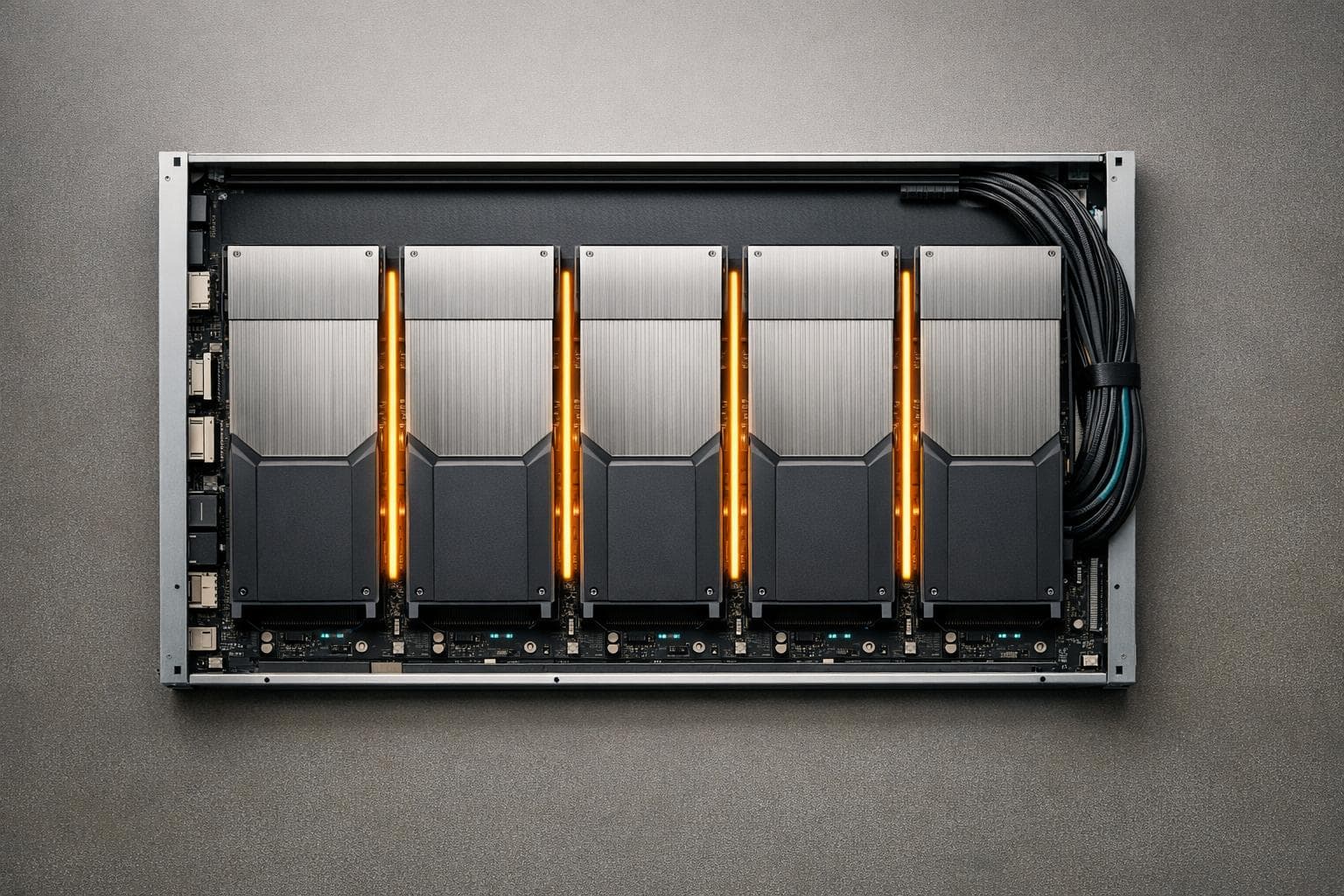

Practical guide to AI storage: VRAM/RAM sizing, NVMe vs HDD, checkpoints, object storage, and caching strategies to prevent GPU stalls and cut costs.

Compare redundancy and high availability for AI infrastructure — tradeoffs in cost, recovery time, and how combining them improves resilience.

Guide to profiling LLM latency: measure TTFT, TPOT, and ITL; use PyTorch, Nsight, and tracing; optimize batching, quantization, and memory bandwidth.

Compare Round Robin, Weighted, and Dynamic load-balancing methods, their trade-offs, and the best use cases for web, cloud, and AI workloads.

Monitor AI models to catch silent failures—track hallucinations, data drift, latency, token costs, set alerts, and automate retraining.

Compare Vanilla RNNs, LSTMs, and GRUs—memory, speed, parameter trade-offs and best use cases for short, medium, and long sequence tasks.

Compare five multi-GPU partitioning strategies—data, model, pipeline, sharded, and fully sharded—to balance memory, communication, and scalability.